joshembling / laragenie

An AI bot made for the command line that can read and understand any codebase from your Laravel app.

Requires

- php: ^8.2

- illuminate/contracts: ^10.0|^11.0

- laravel/prompts: ^0.1.13

- openai-php/client: ^0.8.0

- openai-php/laravel: ^0.8.0

- probots-io/pinecone-php: ^1.0.1

- spatie/laravel-package-tools: ^1.14.0

Requires (Dev)

- laravel/pint: ^1.0

- nunomaduro/collision: ^7.8

- orchestra/testbench: ^8.8

- pestphp/pest: ^2.20

- pestphp/pest-plugin-arch: ^2.0

- pestphp/pest-plugin-laravel: ^2.0

README

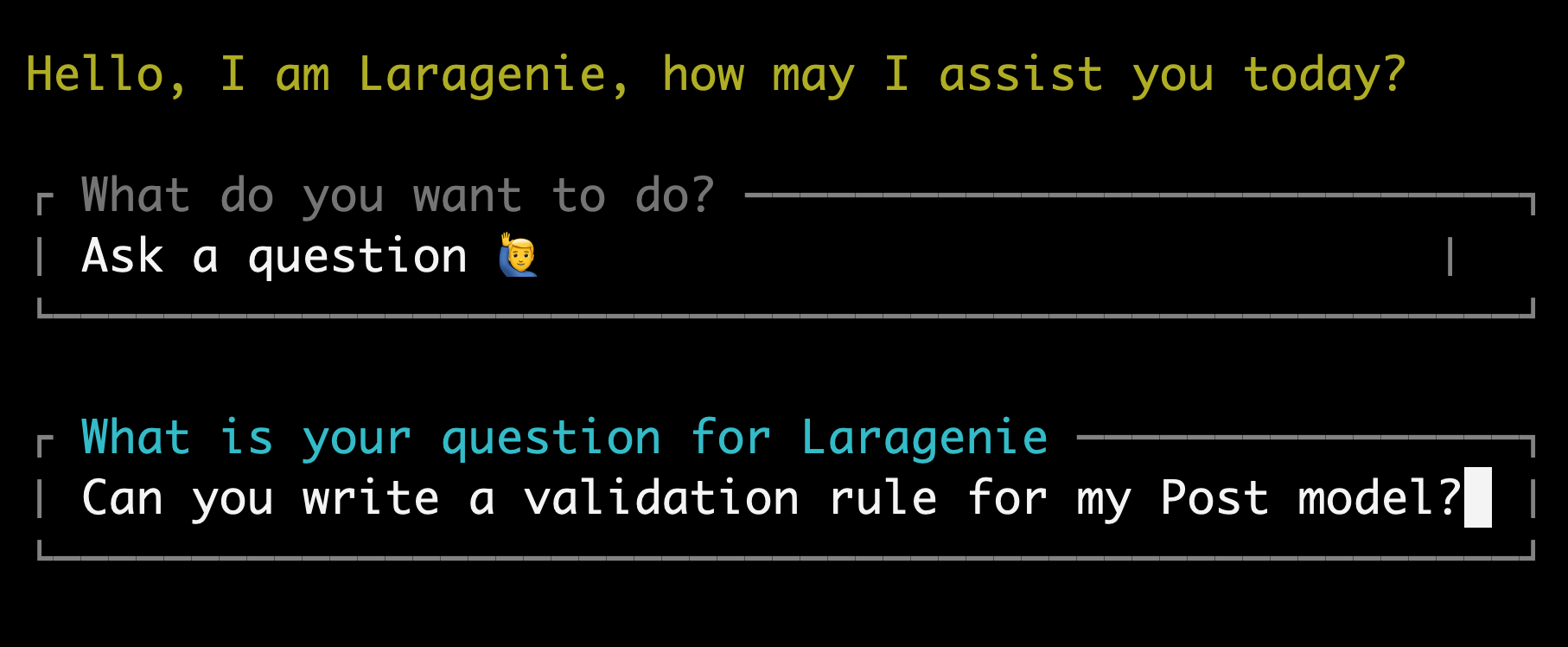

Laragenie is an AI chatbot that runs on the command line from your Laravel app. It will be able to read and understand any of your codebases following a few simple steps:

- Set up your env variables for OpenAI and Pinecone

- Publish and update the Laragenie config

- Index your files and/or full directories

- Ask your questions

It's as simple as that! Accelerate your workflow instantly and collaborate seamlessly with the quickest and most knowledgeable 'colleague' you've ever had.

This is a particularly useful CLI bot that can be used to:

- Onboard developers to new projects.

- Assist both junior and senior developers in understanding a codebase, offering a cost-effective alternative to multiple one-on-one sessions with other developers.

- Provide convenient and readily available support on a daily basis as needed.

You are not limited to indexing files based in your Laravel project. You can use this for monorepos, or indeed any repo in any language. You can also use this tool to index files that are not code-related.

All you need to do is run this CLI tool from the Laravel directory. Simple, right?! 🎉

Note

If you are upgrading from Laragenie ^1.0.63 to 1.1 or later, the Pinecone environment variables have changed. Please see OpenAI and Pinecone.

Contents

- Requirements

- Installation

- Usage

- Debugging

- Changelog

- Contributing

- Security Vulnerabilities

- Credits

- License

Minimum Requirements

For specific versions that match your PHP, Laravel and Laragenie versions please see the table below:

| PHP | Laravel version | Laragenie version |
|---|---|---|
| ^8.1 | ^10.0 | >=1.0 <1.2 |
| ^8.2 | ^10.0, ^11.0 | ^1.2.0 |

This package uses Laravel Prompts which supports macOS, Linux, and Windows with WSL. Due to limitations in the Windows version of PHP, it is not currently possible to use Laravel Prompts on Windows outside of WSL.

For this reason, Laravel Prompts supports falling back to an alternative implementation such as the Symfony Console Question Helper.

Installation

You can install the package via composer:

composer require joshembling/laragenie

You can publish and run the migrations with:

php artisan vendor:publish --tag="laragenie-migrations"

php artisan migrate

If you don't want to publish migrations, you must toggle the database credentials in your Laragenie config to false. (See config file details below).
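As a minimal sketch of what that looks like, the database section of the config would have both options switched off. The key names come from the published config file; the file path in the comment is an assumption based on the usual location of published package configs.

```php
// config/laragenie.php (assumed path of the published config)
'database' => [
    'fetch' => false, // Do not fetch saved answers from previous questions
    'save' => false,  // Do not save answers to the database
],
```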

You can publish the config file with:

php artisan vendor:publish --tag="laragenie-config"

This is the contents of the published config file:

    return [
        'bot' => [
            'name' => 'Laragenie', // The name of your chatbot
            'welcome' => 'Hello, I am Laragenie, how may I assist you today?', // Your welcome message
            'instructions' => 'Write in markdown format. Try to only use factual data that can be pulled from indexed chunks.', // The chatbot instructions
        ],
        'chunks' => [
            'size' => 1000, // Maximum number of characters to separate chunks
        ],
        'database' => [
            'fetch' => true, // Fetch saved answers from previous questions
            'save' => true, // Save answers to the database
        ],
        'extensions' => [ // The file types you want to index
            'php',
            'blade.php',
            'js',
        ],
        'indexes' => [
            'directories' => [], // The directories you want to index e.g. ['app/Models', 'app/Http/Controllers', '../frontend/src']
            'files' => [], // The files you want to index e.g. ['tests/Feature/MyTest.php']
            'removal' => [
                'strict' => true, // User prompt on deletion requests of indexes
            ],
        ],
        'openai' => [
            'embedding' => [
                'model' => 'text-embedding-3-small', // Text embedding model
                'max_tokens' => 5, // Maximum tokens to use when embedding
            ],
            'chat' => [
                'model' => 'gpt-4-turbo-preview', // Your OpenAI GPT model
                'temperature' => 0.1, // Set temperature between 0 and 1 (lower values will have less irrelevance)
            ],
        ],
        'pinecone' => [
            'topK' => 2, // Pinecone indexes to fetch
        ],
    ];
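To give a feel for what the chunks.size option controls, here is a rough sketch of splitting a file's contents into pieces of at most 1000 characters. The chunkText helper is hypothetical and only illustrates the idea; Laragenie's own chunking logic may differ.

```php
<?php

/**
 * Split text into chunks of at most $size characters.
 * Hypothetical helper for illustration; not Laragenie's internal code.
 */
function chunkText(string $text, int $size = 1000): array
{
    if ($text === '') {
        return [];
    }

    // mb_str_split counts characters rather than bytes,
    // so multibyte text is never cut in the middle of a character.
    return mb_str_split($text, $size);
}

$chunks = chunkText(str_repeat('a', 2500));

echo count($chunks), PHP_EOL;        // 3
echo mb_strlen($chunks[2]), PHP_EOL; // 500
```

A smaller size produces more, shorter chunks to embed and store; a larger size produces fewer, longer ones.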

Usage

OpenAI and Pinecone

OpenAI

This package uses OpenAI to process and generate responses and Pinecone to index your data.

You will need to create an OpenAI account with credits, generate an API key and add it to your .env file:

OPENAI_API_KEY=your-open-ai-key

Pinecone

Important

If you are using a Laragenie version prior to 1.1 and do not want to upgrade, go straight to Legacy Pinecone.

You will need to create a Pinecone account. There are two different types of account you can set up:

- Serverless

- Pod-based index

As of early 2024, Pinecone recommends that you start with a serverless account. You can optionally set up an account with a payment method attached to get $100 in free credits. However, a free account allows up to 100,000 indexes, which is likely more than enough for any small to medium-sized application.

Create an index with 1536 dimensions and the metric set to 'cosine'. Then generate an API key and add these details to your .env file:

PINECONE_API_KEY=an-example-pinecone-api-key

PINECONE_INDEX_HOST='https://an-example-url.aaa.gcp-starter.pinecone.io'

Your host can be seen in the information box on your index page, alongside the metric, dimensions, pod type, cloud, region and environment.
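The 1536 dimensions match the default output size of the text-embedding-3-small model named in the config, and the 'cosine' metric ranks stored vectors by the angle between them and the question's vector. The sketch below shows that calculation on tiny vectors. The cosineSimilarity function is a hypothetical illustration; Pinecone performs this comparison for you on the server.

```php
<?php

/**
 * Cosine similarity between two equal-length numeric vectors.
 * Hypothetical helper for illustration only.
 */
function cosineSimilarity(array $a, array $b): float
{
    if (count($a) === 0 || count($a) !== count($b)) {
        throw new InvalidArgumentException('Vectors must be non-empty and of equal length.');
    }

    $dot = 0.0;
    $normA = 0.0;
    $normB = 0.0;

    foreach ($a as $i => $value) {
        $dot += $value * $b[$i];
        $normA += $value ** 2;
        $normB += $b[$i] ** 2;
    }

    if ($normA == 0.0 || $normB == 0.0) {
        throw new InvalidArgumentException('Vectors must not be all zeros.');
    }

    return $dot / (sqrt($normA) * sqrt($normB));
}

echo cosineSimilarity([1, 0], [1, 0]), PHP_EOL; // 1 (same direction)
echo cosineSimilarity([1, 0], [0, 1]), PHP_EOL; // 0 (unrelated)
```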

Tip

If you are upgrading to Laragenie ^1.1, you can safely remove the legacy environment variables: PINECONE_ENVIRONMENT and PINECONE_INDEX.

Legacy Pinecone

Important: If you are using Laragenie 1.0.63 or prior, you must use a regular Pinecone account and NOT a serverless account. When you are prompted to select an option on account creation, ensure you select 'Continue with pod-based index'.

Create an environment with 1536 dimensions and name it, then generate an API key and add these details to your .env file:

PINECONE_API_KEY=your-pinecone-api-key

PINECONE_ENVIRONMENT=gcp-starter

PINECONE_INDEX=your-index

Running Laragenie on the command line

Once these are set up, you will be able to run the following command from your root directory:

php artisan laragenie

You will get 4 options:

- Ask a question

- Index files

- Remove indexed files

- Something else

Use the arrow keys to move through the options and press Enter to select one.

Ask a question

Note: you can only run this action once you have files indexed in your Pinecone vector database (skip to the ‘Index Files’ section if you wish to find out how to start indexing).

When your vector database has indexes you’ll be able to ask any questions relating to your codebase.

Answers can be generated in markdown format with code examples, or any format of your choosing. Use the bot.instructions config to write AI instructions as detailed as you need to.

Beneath each response you will see the generated cost (in US dollars), which will help keep close track of the expense. Cost of the response is added to your database, if migrations are enabled.

Costs can vary, but small responses will be less than $0.01. Much larger responses can be between $0.02–0.05.

Force AI

As previously mentioned, when you have migrations enabled your questions will save to your database.

However, you may want to force AI usage (prevent fetching from the database) if you are unsatisfied with the initial answer. This will overwrite the answer already saved to the database.

To force an AI response, end your question with the --ai flag, e.g.

Tell me how users are saved to the database --ai

This ensures the AI model re-assesses your request and outputs another answer (this could be the same answer, depending on the GPT model you are using).
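One way to picture the flag handling is the sketch below, which checks whether a question ends with --ai and strips the flag before the question is used. The parseQuestion function and its return keys are hypothetical; this is not Laragenie's actual parsing code, and it assumes the flag is the very last thing typed.

```php
<?php

/**
 * Detect a trailing --ai flag and return the cleaned question.
 * Hypothetical helper for illustration only.
 */
function parseQuestion(string $input): array
{
    $question = trim($input);
    $forceAi = str_ends_with($question, '--ai');

    if ($forceAi) {
        // Drop the 4-character flag and any whitespace before it.
        $question = rtrim(substr($question, 0, -4));
    }

    return ['question' => $question, 'force_ai' => $forceAi];
}

$parsed = parseQuestion('Tell me how users are saved to the database --ai');

var_dump($parsed['force_ai']);     // bool(true)
echo $parsed['question'], PHP_EOL; // Tell me how users are saved to the database
```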

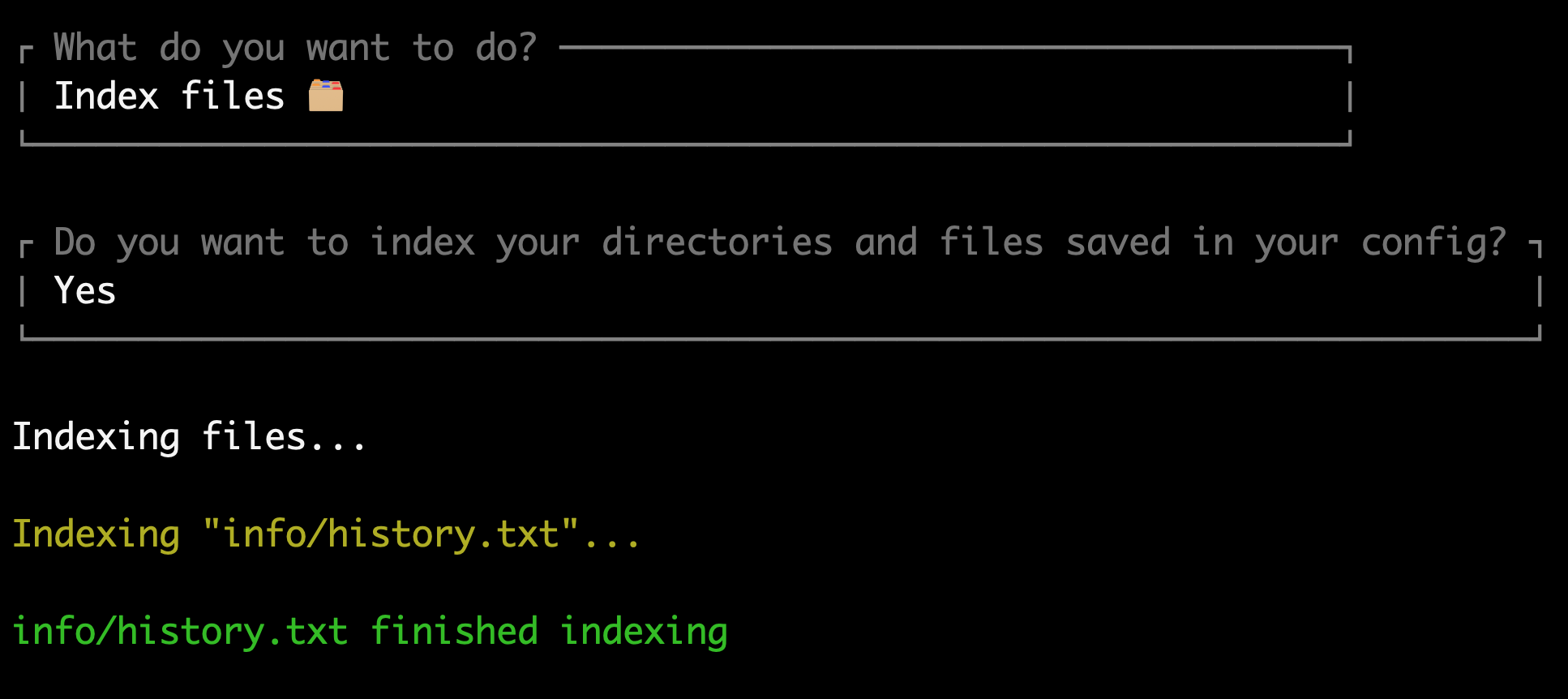

Index files

The quickest way to index files is to pass in singular values to the directories or files array in the Laragenie config. When you run the 'Index Files' command you will always have the option to reindex these files. This will help in keeping your Laragenie bot up to date.

Select 'yes' when prompted with 'Do you want to index your directories and files saved in your config?'

    'indexes' => [
        'directories' => ['app/Models', 'app/Http/Controllers'],
        'files' => ['tests/Feature/MyTest.php'],
        'removal' => [
            'strict' => true,
        ],
    ],

If you select 'no', you can also index files in the following ways:

- Inputting a file name with its namespace e.g. `app/Models/User.php`

- Inputting a full directory, e.g. `App`

  - If you pass in a directory, Laragenie can only index files within this directory, and not its subdirectories.

  - To index subdirectories you must explicitly pass the path e.g. `app/Models` to index all of your models

- Inputting multiple files or directories in a comma separated list e.g. `app/Models, tests/Feature, app/Http/Controllers/Controller.php`

- Inputting multiple directories with wildcards e.g. `app/Models/*.php`

  - Please note that the wildcards must still match the file extensions in your `laragenie` config file.
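The input rules above can be sketched roughly as follows: split a comma separated list into individual paths, then check each file against the configured extensions. Both parsePaths and hasIndexableExtension are hypothetical helpers written for illustration; they are not part of Laragenie's API.

```php
<?php

/**
 * Split a comma separated list of paths and trim each entry.
 * Hypothetical helper for illustration only.
 */
function parsePaths(string $input): array
{
    $paths = array_map('trim', explode(',', $input));

    // Drop empty entries left by stray commas.
    return array_values(array_filter($paths, fn (string $path): bool => $path !== ''));
}

/**
 * Check whether a file path ends with one of the configured extensions.
 * Hypothetical helper for illustration only.
 */
function hasIndexableExtension(string $path, array $extensions): bool
{
    foreach ($extensions as $extension) {
        if (str_ends_with($path, '.' . $extension)) {
            return true;
        }
    }

    return false;
}

$extensions = ['php', 'blade.php', 'js']; // Mirrors the default 'extensions' config

print_r(parsePaths('app/Models, tests/Feature, app/Http/Controllers/Controller.php'));

var_dump(hasIndexableExtension('app/Models/User.php', $extensions));   // bool(true)
var_dump(hasIndexableExtension('resources/css/app.css', $extensions)); // bool(false)
```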

Indexing files outside of your Laravel project

You may use Laragenie in any way that you wish; you are not limited to just indexing Laravel based files.

For example, your Laravel project may live in a monorepo with two root entries such as frontend and backend. In this instance, you could move up one level to index more directories and files e.g. ../frontend/src/ or ../frontend/components/Component.js.

You can add these to your directories and files in the Laragenie config:

    'indexes' => [
        'directories' => ['app/Models', 'app/Http/Controllers', '../frontend/src/'],
        'files' => ['tests/Feature/MyTest.php', '../frontend/components/Component.js'],
        'removal' => [
            'strict' => true,
        ],
    ],

Using this same method, you could technically index any files or directories you have access to on your server or local machine.

Ensure your extensions in your Laragenie config match all the file types that you want to index.

    'extensions' => [
        'php',
        'blade.php',
        'js',
        'jsx',
        'ts',
        'tsx',
        // etc...
    ],

Note: if your directories, paths or file names change, Laragenie will not be able to find the index if you decide to update/remove it later on (unless you truncate your entire vector database, or go into Pinecone and delete them manually).

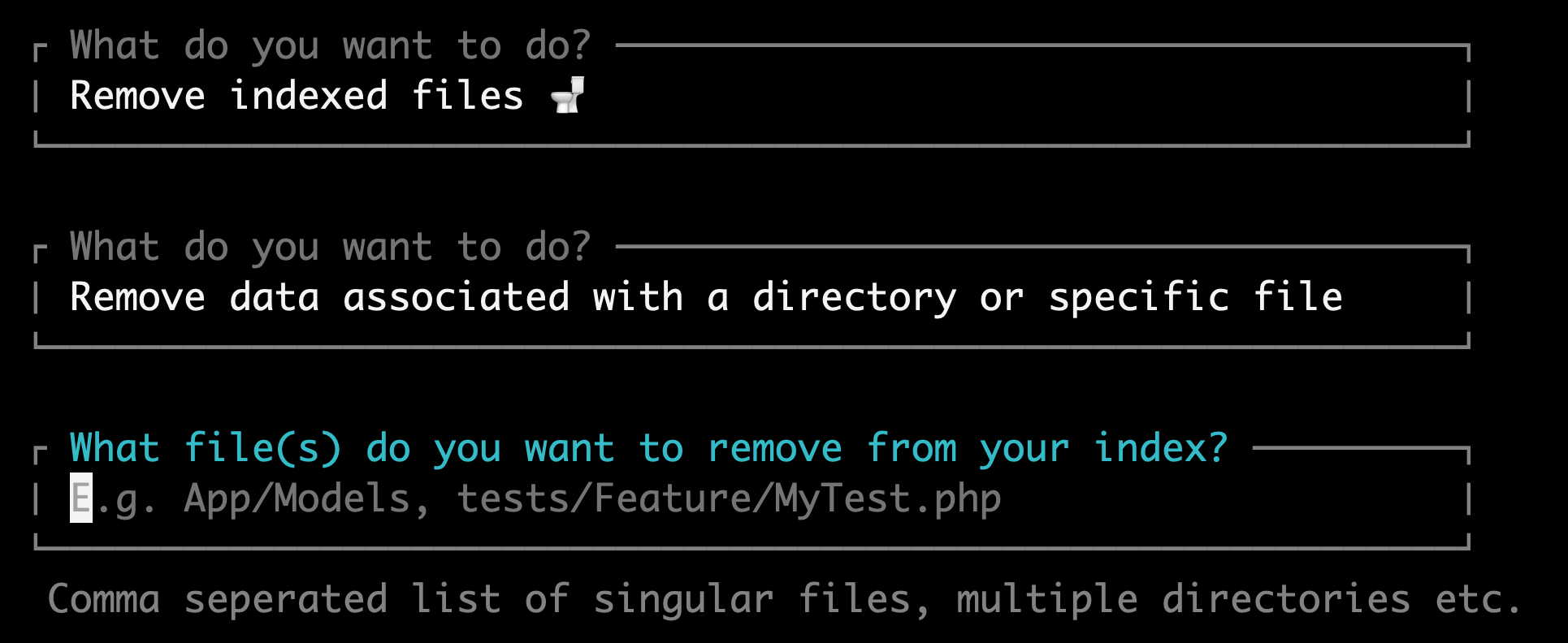

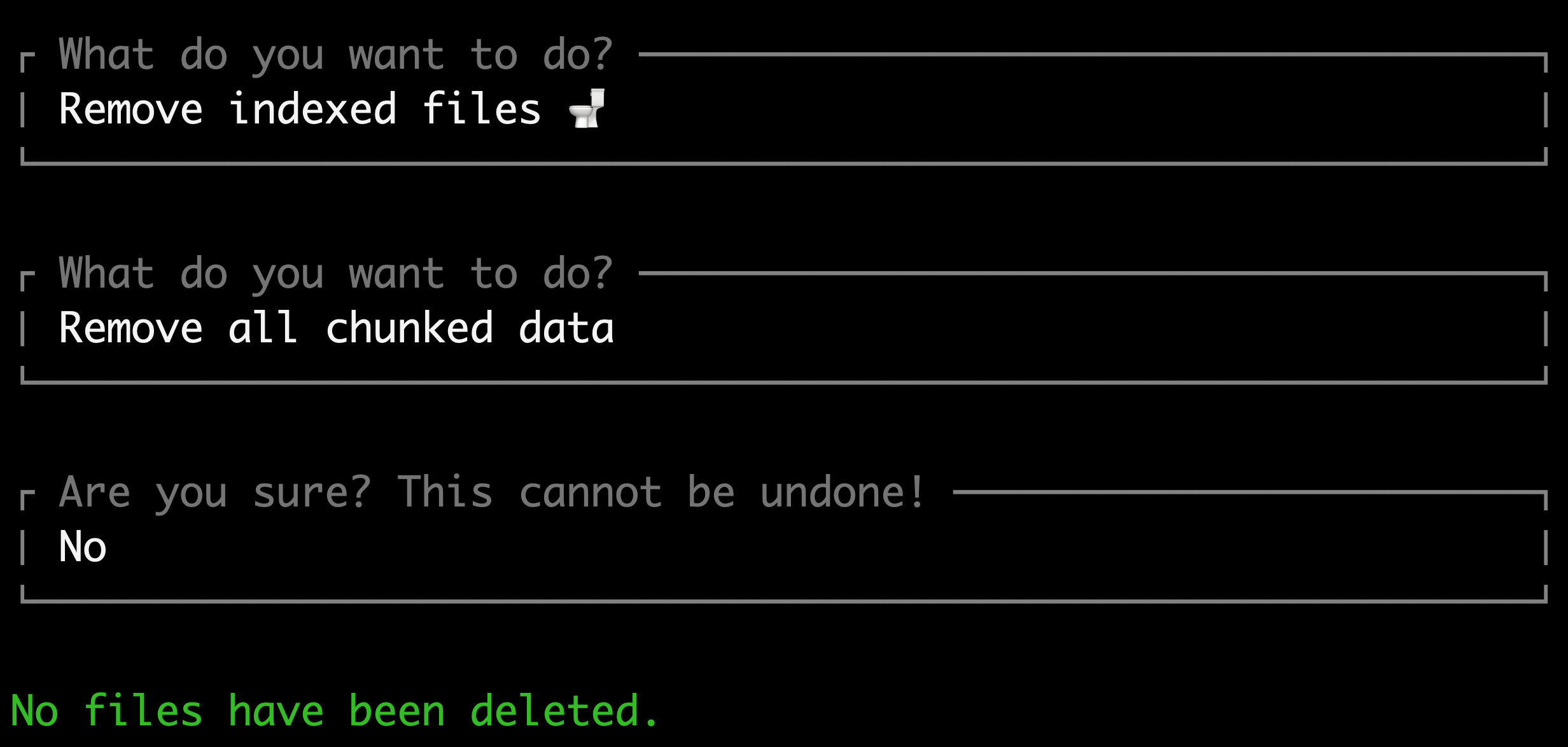

Removing indexed files

You can remove indexed files using the same methods listed above, except for the directories or files arrays in the Laragenie config, which are currently for indexing purposes only.

If you want to remove all files you may do so by selecting Remove all chunked data. Be warned that this will truncate your entire vector database and cannot be reversed.

To remove a comma separated list of files/directories, select the 'Remove data associated with a directory or specific file' option.

Strict removal, i.e. warning messages before files are removed, is on by default. It can be turned off by setting the 'strict' attribute to false in your config, and back on by setting it to true.

    'indexes' => [
        'removal' => [
            'strict' => true,
        ],
    ],
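For comparison, a sketch of the same section with strict removal switched off. Only the value changes; the key names are those from the published config, and the comment describes the expected effect rather than verified behaviour.

```php
'indexes' => [
    'removal' => [
        'strict' => false, // Expected effect: no warning prompt before indexed files are removed
    ],
],
```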

Stopping Laragenie

You can stop Laragenie using the following methods:

- `ctrl + c` (Linux/Mac)

- Selecting `No thanks, goodbye` in the user menu after at least 1 prompt has run.

Have fun using Laragenie! 🤖

Debugging

API Keys

- If you have correctly added the required `.env` variables, but get an error such as "You didn't provide an API key", you may need to clear your cache and config:

php artisan config:clear

php artisan cache:clear

- Likewise, if you get a 404 response and a Saloon exception when trying any of the four options, it's likely you are using a Laragenie version prior to 1.1 with a serverless Pinecone database. Versions prior to 1.1 require a pod-based (non-serverless) index. Please see OpenAI and Pinecone.

Changelog

Please see CHANGELOG for more information on what has changed recently.

Contributing

Please see CONTRIBUTING for details.

Security Vulnerabilities

Please review our security policy on how to report security vulnerabilities.

Credits

License

The MIT License (MIT). Please see License File for more information.