google-gemini-php / client

Gemini PHP is a supercharged PHP API client that allows you to interact with the Gemini API.

Requires

- php: ^8.1.0

- php-http/discovery: ^1.19.2

- psr/http-client-implementation: *

- psr/http-factory-implementation: *

Requires (Dev)

- guzzlehttp/guzzle: ^7.8.1

- guzzlehttp/psr7: ^2.6.2

- laravel/pint: ^1.18.1

- mockery/mockery: ^1.6.7

- pestphp/pest: ^2.32.3

- phpstan/phpstan: ^2.1.32

Suggests

- ext-fileinfo: Reads upload file size and mime type if not provided

This package is not auto-updated.

Last update: 2026-05-18 19:26:39 UTC

README

Gemini PHP is a community-maintained PHP API client that allows you to interact with the Gemini AI API.

- Fatih AYDIN github.com/aydinfatih

- Vytautas Smilingis github.com/Plytas

Table of Contents

- Prerequisites

- Setup

- Usage

- Chat Resource

- Text-only Input

- Text-and-image Input

- Text-and-video Input

- Image Generation

- Multi-turn Conversations (Chat)

- Chat with Streaming

- Stream Generate Content

- Structured Output

- Function calling

- Code Execution

- Grounding with Google Search

- Grounding with Google Maps

- Grounding with File Search

- System Instructions

- Speech generation

- Thinking Mode

- Count tokens

- Configuration

- File Management

- Cached Content

- File Search Stores

- File Search Documents

- Embedding Resource

- Models

- Troubleshooting

- Testing

Prerequisites

To complete this quickstart, make sure that your development environment meets the following requirements:

- Requires PHP 8.1+

Setup

Installation

First, install Gemini via the Composer package manager:

composer require google-gemini-php/client

Ensure that the php-http/discovery composer plugin is allowed to run or install a client manually if your project does not already have a PSR-18 client integrated.

composer require guzzlehttp/guzzle

Set up your API key

To use the Gemini API, you'll need an API key. If you don't already have one, create a key in Google AI Studio.

Upgrade to 2.0

Starting with the 2.0 release, this package works only with the Gemini v1beta API (see API versions).

To update, run this command:

composer require google-gemini-php/client:^2.0

This release introduces support for new features:

- Structured output

- System instructions

- File uploads

- Function calling

- Code execution

- Grounding with Google Search

- Cached content

- Thinking model configuration

- Speech model configuration

- URL context retrieval

The \Gemini\Enums\ModelType enum has been deprecated and will be removed in the next major version. The $client->geminiPro() and $client->geminiFlash() methods have been deprecated along with it.

We suggest using the $client->generativeModel() method and passing in the model string directly. All methods that previously accepted the ModelType enum now accept a BackedEnum. We recommend implementing your own enum for convenience.
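As a rough sketch of that recommendation, a string-backed enum could hold the model names your project uses. The enum name `AppModel` and its cases are hypothetical and not part of the package; only the model strings themselves appear elsewhere in this README.

```php
<?php

// Hypothetical project-level enum; the name and cases are illustrative only.
enum AppModel: string
{
    case Flash = 'gemini-2.0-flash';
    case FlashImage = 'gemini-2.5-flash-image';
    case Embedding = 'text-embedding-004';
}

// A backed enum exposes its underlying string through ->value.
echo AppModel::Flash->value, PHP_EOL;

// Assumption: per the upgrade note, methods that used to take ModelType
// accept any BackedEnum, so a case could be passed directly, e.g.
// $client->generativeModel(model: AppModel::Flash)
```

Keeping the names in one enum means a model upgrade is a one-line change rather than a search through every call site.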

There may be other breaking changes not listed here. If you encounter any issues, please submit an issue or a pull request.

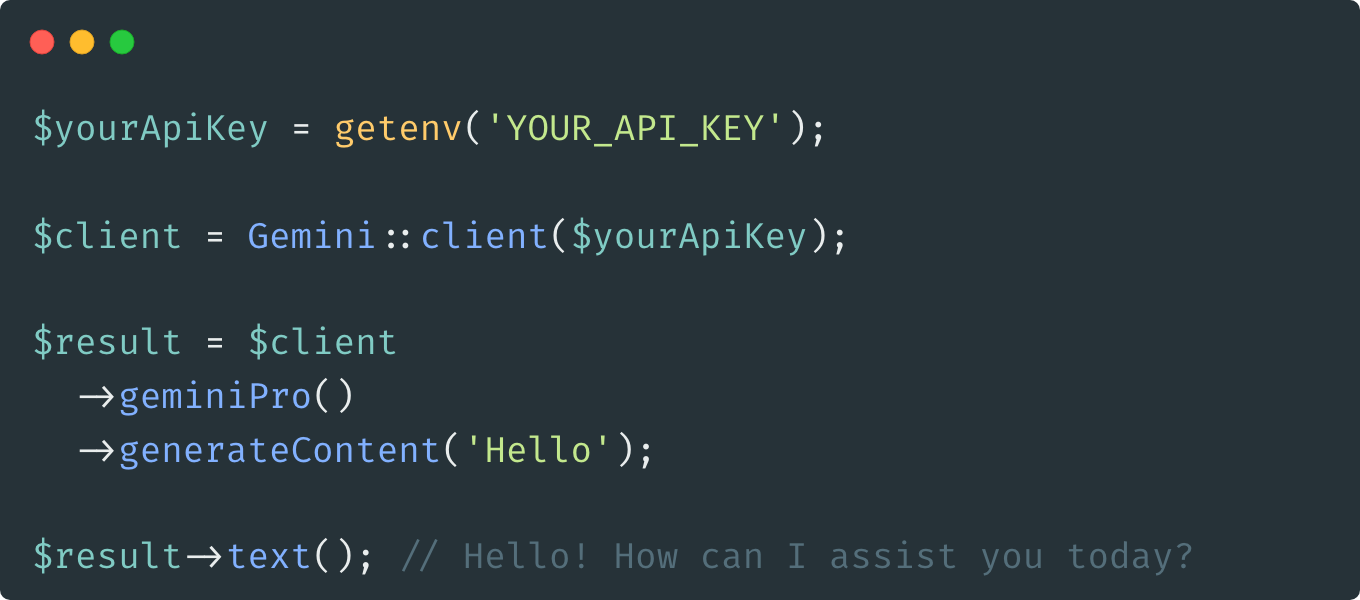

Usage

Interact with Gemini's API:

use Gemini\Enums\ModelVariation;
use Gemini\GeminiHelper;
use Gemini;

$yourApiKey = getenv('YOUR_API_KEY');
$client = Gemini::client($yourApiKey);

$result = $client->generativeModel(model: 'gemini-2.0-flash')->generateContent('Hello');
$result->text(); // Hello! How can I assist you today?

// Helper method usage
$result = $client->generativeModel(
    model: GeminiHelper::generateGeminiModel(
        variation: ModelVariation::FLASH,
        generation: 2.5,
        version: "preview-04-17"
    ), // models/gemini-2.5-flash-preview-04-17
)->generateContent('Hello');
$result->text(); // Hello! How can I assist you today?

If necessary, it is possible to configure and create a separate client.

use Gemini;
use Psr\Http\Message\RequestInterface;
use Psr\Http\Message\ResponseInterface;

$yourApiKey = getenv('YOUR_API_KEY');

$client = Gemini::factory()
    ->withApiKey($yourApiKey)
    ->withBaseUrl('https://generativelanguage.example.com/v1beta') // default: https://generativelanguage.googleapis.com/v1beta/
    ->withHttpHeader('X-My-Header', 'foo')
    ->withQueryParam('my-param', 'bar')
    ->withHttpClient($guzzleClient = new \GuzzleHttp\Client(['timeout' => 30])) // default: HTTP client found using PSR-18 HTTP Client Discovery
    ->withStreamHandler(fn (RequestInterface $request): ResponseInterface => $guzzleClient->send($request, [
        'stream' => true // Lets you provide a custom stream handler for the HTTP client.
    ]))
    ->make();

Chat Resource

For a complete list of supported input formats and methods in Gemini API v1, see the models documentation.

Text-only Input

Generate a response from the model given an input message.

use Gemini;

$yourApiKey = getenv('YOUR_API_KEY');
$client = Gemini::client($yourApiKey);

$result = $client->generativeModel(model: 'gemini-2.0-flash')->generateContent('Hello');

$result->text(); // Hello! How can I assist you today?

Text-and-image Input

Generate responses by providing both text prompts and images to the Gemini model.

use Gemini\Data\Blob;
use Gemini\Enums\MimeType;

$result = $client
    ->generativeModel(model: 'gemini-2.0-flash')
    ->generateContent([
        'What is this picture?',
        new Blob(
            mimeType: MimeType::IMAGE_JPEG,
            data: base64_encode(
                file_get_contents('https://storage.googleapis.com/generativeai-downloads/images/scones.jpg')
            )
        )
    ]);

$result->text(); // The picture shows a table with a white tablecloth. On the table are two cups of coffee, a bowl of blueberries, a silver spoon, and some flowers. There are also some blueberry scones on the table.

Text-and-video Input

Process video content and get AI-generated descriptions using the Gemini API with an uploaded video file.

use Gemini\Data\UploadedFile;
use Gemini\Enums\MimeType;

$result = $client
    ->generativeModel(model: 'gemini-2.0-flash')
    ->generateContent([
        'What is this video?',
        new UploadedFile(
            fileUri: '123-456', // accepts just the name or the full URI
            mimeType: MimeType::VIDEO_MP4
        )
    ]);

$result->text(); // The video shows...

Image Generation

Generate images from text prompts using an image-capable Gemini model.

use Gemini\Data\ImageConfig;
use Gemini\Data\GenerationConfig;

$imageConfig = new ImageConfig(aspectRatio: '16:9');
$generationConfig = new GenerationConfig(imageConfig: $imageConfig);

$response = $client->generativeModel(model: 'gemini-2.5-flash-image')
    ->withGenerationConfig($generationConfig)
    ->generateContent('Draw a futuristic city');

// Save the image
file_put_contents('image.png', base64_decode($response->parts()[0]->inlineData->data));

Multi-turn Conversations (Chat)

Using Gemini, you can build freeform conversations across multiple turns.

use Gemini\Data\Content;
use Gemini\Enums\Role;

$chat = $client
    ->generativeModel(model: 'gemini-2.0-flash')
    ->startChat(history: [
        Content::parse(part: 'The stories you write about what I have to say should be one line. Is that clear?'),
        Content::parse(part: 'Yes, I understand. The stories I write about your input should be one line long.', role: Role::MODEL)
    ]);

$response = $chat->sendMessage('Create a story set in a quiet village in 1600s France');
echo $response->text(); // Amidst rolling hills and winding cobblestone streets, the tranquil village of Beausoleil whispered tales of love, intrigue, and the magic of everyday life in 17th century France.

$response = $chat->sendMessage('Rewrite the same story in 1600s England');
echo $response->text(); // In the heart of England's lush countryside, amidst emerald fields and thatched-roof cottages, the village of Willowbrook unfolded a tapestry of love, mystery, and the enchantment of ordinary days in the 17th century.

Chat with Streaming

You can also stream the response in a chat session. The history is automatically updated with the full response after the stream completes.

$chat = $client->generativeModel(model: 'gemini-2.0-flash')->startChat();

$stream = $chat->streamSendMessage('Hello');

foreach ($stream as $response) {
    echo $response->text();
}

Stream Generate Content

By default, the model returns a response after completing the entire generation process. You can achieve faster interactions by not waiting for the entire result, and instead use streaming to handle partial results.

$stream = $client
    ->generativeModel(model: 'gemini-2.0-flash')
    ->streamGenerateContent('Write a long story about a magic backpack.');

foreach ($stream as $response) {
    echo $response->text();
}

Structured Output

Gemini generates unstructured text by default, but some applications require structured text. For these use cases, you can constrain Gemini to respond with JSON, a structured data format suitable for automated processing. You can also constrain the model to respond with one of the options specified in an enum.

use Gemini\Data\GenerationConfig;
use Gemini\Data\Schema;
use Gemini\Enums\DataType;
use Gemini\Enums\ResponseMimeType;

$result = $client
    ->generativeModel(model: 'gemini-2.0-flash')
    ->withGenerationConfig(
        generationConfig: new GenerationConfig(
            responseMimeType: ResponseMimeType::APPLICATION_JSON,
            responseSchema: new Schema(
                type: DataType::ARRAY,
                items: new Schema(
                    type: DataType::OBJECT,
                    properties: [
                        'recipe_name' => new Schema(type: DataType::STRING),
                        'cooking_time_in_minutes' => new Schema(type: DataType::INTEGER)
                    ],
                    required: ['recipe_name', 'cooking_time_in_minutes'],
                )
            )
        )
    )
    ->generateContent('List 5 popular cookie recipes with cooking time');

$result->json();

//[
//    {
//      +"cooking_time_in_minutes": 10,
//      +"recipe_name": "Chocolate Chip Cookies",
//    },
//    {
//      +"cooking_time_in_minutes": 12,
//      +"recipe_name": "Oatmeal Raisin Cookies",
//    },
//    {
//      +"cooking_time_in_minutes": 10,
//      +"recipe_name": "Peanut Butter Cookies",
//    },
//    {
//      +"cooking_time_in_minutes": 10,
//      +"recipe_name": "Snickerdoodles",
//    },
//    {
//      +"cooking_time_in_minutes": 12,
//      +"recipe_name": "Sugar Cookies",
//    },
//  ]

Function calling

Gemini provides the ability to define and utilize custom functions that the model can call during conversations. This enables the model to perform specific actions or calculations through your defined functions.

<?php

use Gemini\Data\Content;
use Gemini\Data\FunctionCall;
use Gemini\Data\FunctionDeclaration;
use Gemini\Data\FunctionResponse;
use Gemini\Data\Part;
use Gemini\Data\Schema;
use Gemini\Data\Tool;
use Gemini\Enums\DataType;
use Gemini\Enums\Role;

function handleFunctionCall(FunctionCall $functionCall): Content
{
    if ($functionCall->name === 'addition') {
        return new Content(
            parts: [
                new Part(
                    functionResponse: new FunctionResponse(
                        name: 'addition',
                        response: ['answer' => $functionCall->args['number1'] + $functionCall->args['number2']],
                    ),
                    thoughtSignature: 'some-signature' // Optional: Required for some models (e.g. Gemini 3 Pro)
                )
            ],
            role: Role::USER
        );
    }

    // Handle other function calls here. Fail loudly on unknown names so the
    // declared Content return type is never violated.
    throw new \InvalidArgumentException("Unhandled function call: {$functionCall->name}");
}

$chat = $client
    ->generativeModel(model: 'gemini-2.0-flash')
    ->withTool(new Tool(
        functionDeclarations: [
            new FunctionDeclaration(
                name: 'addition',
                description: 'Performs addition',
                parameters: new Schema(
                    type: DataType::OBJECT,
                    properties: [
                        'number1' => new Schema(
                            type: DataType::NUMBER,
                            description: 'First number'
                        ),
                        'number2' => new Schema(
                            type: DataType::NUMBER,
                            description: 'Second number'
                        ),
                    ],
                    required: ['number1', 'number2']
                )
            )
        ]
    ))
    ->startChat();

$response = $chat->sendMessage('What is 4 + 3?');

if ($response->parts()[0]->functionCall !== null) {
    $thoughtSignature = $response->parts()[0]->thoughtSignature; // Access the thought signature
    $functionResponse = handleFunctionCall($response->parts()[0]->functionCall);

    $response = $chat->sendMessage($functionResponse);
}

echo $response->text(); // 4 + 3 = 7
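When more than one function is declared, a lookup table keyed by function name may be easier to maintain than a chain of `if` statements. The sketch below is plain PHP and does not touch the client; `$handlers` and `dispatchFunctionCall()` are hypothetical names, and the returned array stands in for the `response` payload of a `FunctionResponse`.

```php
<?php

// Hypothetical handler table: function name => callable taking the call's args.
$handlers = [
    'addition' => fn (array $args): array => ['answer' => $args['number1'] + $args['number2']],
    'subtraction' => fn (array $args): array => ['answer' => $args['number1'] - $args['number2']],
];

/**
 * Look up and run the handler for a function call, returning the payload
 * that would go into the function response.
 */
function dispatchFunctionCall(array $handlers, string $name, array $args): array
{
    if (!isset($handlers[$name])) {
        throw new InvalidArgumentException("No handler registered for function '{$name}'.");
    }

    return $handlers[$name]($args);
}

$payload = dispatchFunctionCall($handlers, 'addition', ['number1' => 4, 'number2' => 3]);
echo json_encode($payload), PHP_EOL; // {"answer":7}
```

Throwing on an unknown name surfaces a mismatch between declared and implemented functions immediately instead of sending an empty response back to the model.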

Code Execution

Gemini models can generate and execute code automatically, and return the result to you. This is useful for tasks that require computation, data manipulation, or other programmatic operations.

use Gemini\Data\CodeExecution;
use Gemini\Data\Tool;

$response = $client
    ->generativeModel(model: 'gemini-2.0-flash')
    ->withTool(new Tool(codeExecution: CodeExecution::from()))
    ->generateContent('What is the sum of the first 50 prime numbers? Generate and run code for the calculation, and make sure you get all 50.');

// Access the executed code and results
foreach ($response->parts() as $part) {
    if ($part->executableCode !== null) {
        echo "Language: " . $part->executableCode->language->value . "\n";
        echo "Code: " . $part->executableCode->code . "\n";
    }

    if ($part->codeExecutionResult !== null) {
        echo "Outcome: " . $part->codeExecutionResult->outcome->value . "\n";
        echo "Output: " . $part->codeExecutionResult->output . "\n";
    }
}

Grounding with Google Search

Grounding with Google Search connects the Gemini model to real-time web content and works with all available languages. This allows Gemini to provide more accurate answers and cite verifiable sources beyond its knowledge cutoff.

For Gemini 2.0 and later models (Recommended):

Use the simple GoogleSearch tool which automatically handles search queries:

use Gemini\Data\GoogleSearch;
use Gemini\Data\Tool;

$response = $client
    ->generativeModel(model: 'gemini-2.0-flash')
    ->withTool(new Tool(googleSearch: GoogleSearch::from()))
    ->generateContent('Who won the Euro 2024?');

echo $response->text();
// Spain won Euro 2024, defeating England 2-1 in the final.

// Access grounding metadata to see sources
$groundingMetadata = $response->candidates[0]->groundingMetadata;

if ($groundingMetadata !== null) {
    // Get the search queries that were executed
    foreach ($groundingMetadata->webSearchQueries ?? [] as $query) {
        echo "Search query: {$query}\n";
    }

    // Get the web sources
    foreach ($groundingMetadata->groundingChunks ?? [] as $chunk) {
        if ($chunk->web !== null) {
            echo "Source: {$chunk->web->title} - {$chunk->web->uri}\n";
        }
    }

    // Get grounding supports (links text segments to sources)
    foreach ($groundingMetadata->groundingSupports ?? [] as $support) {
        if ($support->segment !== null) {
            echo "Text segment: {$support->segment->text}\n";
            echo "Supported by chunks: " . implode(', ', $support->groundingChunkIndices ?? []) . "\n";
        }
    }
}

Grounding with Google Maps

Grounding with Google Maps allows the model to utilize real-world geographical data. This enables more precise location-based responses, such as finding nearby points of interest.

use Gemini\Data\GoogleMaps;
use Gemini\Data\RetrievalConfig;
use Gemini\Data\Tool;
use Gemini\Data\ToolConfig;

$tool = new Tool(
    googleMaps: new GoogleMaps(enableWidget: true)
);

$toolConfig = new ToolConfig(
    retrievalConfig: new RetrievalConfig(
        latitude: 40.758896,
        longitude: -73.985130
    )
);

$response = $client
    ->generativeModel(model: 'gemini-2.0-flash')
    ->withTool($tool)
    ->withToolConfig($toolConfig)
    ->generateContent('Find coffee shops near me');

echo $response->text();
// (Model output referencing coffee shops)

Grounding with File Search

Grounding with File Search enables the model to retrieve and utilize information from your indexed files. This is useful for answering questions based on private or extensive document collections.

use Gemini\Data\FileSearch;
use Gemini\Data\Tool;

$tool = new Tool(
    fileSearch: new FileSearch(
        fileSearchStoreNames: ['files/my-document-store'],
        metadataFilter: 'author = "Robert Graves"'
    )
);

$response = $client
    ->generativeModel(model: 'gemini-2.0-flash')
    ->withTool($tool)
    ->generateContent('Summarize the document about Greek myths by Robert Graves');

echo $response->text();
// (Model output summarizing the document)

System Instructions

System instructions let you steer the behavior of the model based on your specific needs and use cases. You can set the role and personality of the model, define the format of responses, and provide goals and guardrails for model behavior.

use Gemini\Data\Content;

$response = $client
    ->generativeModel(model: 'gemini-2.0-flash')
    ->withSystemInstruction(
        Content::parse('You are a helpful assistant that always responds in the style of a pirate. Use nautical terms and pirate slang in all your responses.')
    )
    ->generateContent('Tell me about PHP programming');

echo $response->text();
// Ahoy there, matey! Let me tell ye about this fine treasure called PHP programming...

You can also combine system instructions with other features:

use Gemini\Data\Content;
use Gemini\Data\GenerationConfig;
use Gemini\Enums\ResponseMimeType;

$response = $client
    ->generativeModel(model: 'gemini-2.0-flash')
    ->withSystemInstruction(
        Content::parse('You are a JSON API. Always respond with valid JSON objects. Be concise.')
    )
    ->withGenerationConfig(
        new GenerationConfig(responseMimeType: ResponseMimeType::APPLICATION_JSON)
    )
    ->generateContent('Give me information about the Eiffel Tower');

print_r($response->json());

Speech generation

Gemini can generate speech from text. To use this feature, pick a model that supports it. The model returns the audio as a base64-encoded string.

Single speaker

use Gemini\Data\GenerationConfig;
use Gemini\Data\SpeechConfig;
use Gemini\Data\VoiceConfig;
use Gemini\Data\PrebuiltVoiceConfig;
use Gemini\Enums\ResponseModality;

$response = $client->generativeModel('gemini-2.5-flash-preview-tts')->withGenerationConfig(
    generationConfig: new GenerationConfig(
        responseModalities: [ResponseModality::AUDIO],
        speechConfig: new SpeechConfig(
            voiceConfig: new VoiceConfig(
                new PrebuiltVoiceConfig(voiceName: 'Kore')
            ),
        )
    )
)->generateContent("Say: Hello world");

// The response contains base64 encoded audio
$audioData = $response->parts()[0]->inlineData->data;

Multi speaker

use Gemini\Data\GenerationConfig;
use Gemini\Data\SpeechConfig;
use Gemini\Data\MultiSpeakerVoiceConfig;
use Gemini\Data\PrebuiltVoiceConfig;
use Gemini\Data\SpeakerVoiceConfig;
use Gemini\Data\VoiceConfig;
use Gemini\Enums\ResponseModality;

$response = $client->generativeModel('gemini-2.5-flash-preview-tts')->withGenerationConfig(
    generationConfig: new GenerationConfig(
        responseModalities: [ResponseModality::AUDIO],
        speechConfig: new SpeechConfig(
            multiSpeakerVoiceConfig: new MultiSpeakerVoiceConfig([
                new SpeakerVoiceConfig(
                    speaker: 'Joe',
                    voiceConfig: new VoiceConfig(
                        new PrebuiltVoiceConfig('Kore'),
                    )
                ),
                new SpeakerVoiceConfig(
                    speaker: 'Jane',
                    voiceConfig: new VoiceConfig(
                        new PrebuiltVoiceConfig('Puck'),
                    )
                )
            ]),
            languageCode: 'en-GB'
        )
    )
)->generateContent("TTS the following conversation between Joe and Jane:\nJoe: How's it going today Jane?\nJane: Not too bad, how about you?");

// The response contains base64 encoded audio
$audioData = $response->parts()[0]->inlineData->data;
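The decoded audio is not necessarily a playable file on its own. A minimal sketch follows, assuming the model returns raw 16-bit little-endian mono PCM at 24 kHz. This README does not state the format, so check the mime type reported with the response before relying on these values. `pcmToWav()` is a hypothetical helper, not part of the package, and the sample data below is a stand-in for `$response->parts()[0]->inlineData->data`.

```php
<?php

/**
 * Wrap raw PCM samples in a minimal 44-byte WAV (RIFF) header.
 * Assumption: the defaults (24 kHz, mono, 16-bit) match the model's output.
 */
function pcmToWav(string $pcm, int $sampleRate = 24000, int $channels = 1, int $bitsPerSample = 16): string
{
    $blockAlign = intdiv($channels * $bitsPerSample, 8); // bytes per sample frame
    $byteRate = $sampleRate * $blockAlign;               // bytes per second
    $dataSize = strlen($pcm);

    return 'RIFF' . pack('V', 36 + $dataSize) . 'WAVE'
        . 'fmt ' . pack('VvvVVvv', 16, 1, $channels, $sampleRate, $byteRate, $blockAlign, $bitsPerSample)
        . 'data' . pack('V', $dataSize)
        . $pcm;
}

// Stand-in for the base64 string returned by the API: four silent samples.
$audioData = base64_encode(str_repeat("\x00\x00", 4));

$wav = pcmToWav(base64_decode($audioData));
file_put_contents(sys_get_temp_dir() . '/speech.wav', $wav);
```

If the response reports a different sample rate or channel count, pass those values explicitly instead of relying on the defaults.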

Thinking Mode

For models that support thinking mode (like Gemini 2.0), you can configure the model to show its reasoning process. This is useful for complex problem-solving and understanding how the model arrives at its answers.

use Gemini\Data\GenerationConfig;
use Gemini\Data\ThinkingConfig;

$response = $client
    ->generativeModel(model: 'gemini-2.0-flash-thinking-exp')
    ->withGenerationConfig(
        new GenerationConfig(
            thinkingConfig: new ThinkingConfig(
                includeThoughts: true,
                thinkingBudget: 1024
            )
        )
    )
    ->generateContent('Solve this logic puzzle: If all Bloops are Razzies and all Razzies are Lazzies, are all Bloops definitely Lazzies?');

// Access the model's thoughts and final answer
foreach ($response->candidates[0]->content->parts as $part) {
    if ($part->thought === true) {
        // This part contains the model's thinking process
        echo "Model's thinking: " . $part->text . "\n\n";
    } elseif ($part->text !== null) {
        // This is the final answer
        echo "Final answer: " . $part->text . "\n";
    }
}

Count tokens

When using long prompts, it might be useful to count tokens before sending any content to the model.

$response = $client
    ->generativeModel(model: 'gemini-2.0-flash')
    ->countTokens('Write a story about a magic backpack.');

echo $response->totalTokens; // 9

Configuration

Every prompt you send to the model includes parameter values that control how the model generates a response. The model can generate different results for different parameter values. Learn more about model parameters.

Also, you can use safety settings to adjust the likelihood of getting responses that may be considered harmful. By default, safety settings block content with medium and/or high probability of being unsafe content across all dimensions. Learn more about safety settings.

When using tools like GoogleMaps, you may also provide additional configuration via ToolConfig, such as RetrievalConfig for geographical context.

use Gemini\Data\GenerationConfig;
use Gemini\Enums\HarmBlockThreshold;
use Gemini\Data\SafetySetting;
use Gemini\Enums\HarmCategory;

$safetySettingDangerousContent = new SafetySetting(
    category: HarmCategory::HARM_CATEGORY_DANGEROUS_CONTENT,
    threshold: HarmBlockThreshold::BLOCK_ONLY_HIGH
);

$safetySettingHateSpeech = new SafetySetting(
    category: HarmCategory::HARM_CATEGORY_HATE_SPEECH,
    threshold: HarmBlockThreshold::BLOCK_ONLY_HIGH
);

$generationConfig = new GenerationConfig(
    stopSequences: [
        'Title',
    ],
    maxOutputTokens: 800,
    temperature: 1,
    topP: 0.8,
    topK: 10
);

// generateContent() returns the response, not the model
$result = $client
    ->generativeModel(model: 'gemini-2.0-flash')
    ->withSafetySetting($safetySettingDangerousContent)
    ->withSafetySetting($safetySettingHateSpeech)
    ->withGenerationConfig($generationConfig)
    ->generateContent('Write a story about a magic backpack.');

File Management

The File API lets you store up to 20GB of files per project, with a per-file maximum size of 2GB. Files are stored for 48 hours and can be accessed in API calls.

File Upload

To reference larger files and videos with various prompts, upload them to Gemini storage.

use Gemini\Enums\FileState;
use Gemini\Enums\MimeType;

$files = $client->files();

echo "Uploading\n";
$meta = $files->upload(
    filename: 'video.mp4',
    mimeType: MimeType::VIDEO_MP4,
    displayName: 'Video'
);

echo "Processing";
do {
    echo ".";
    sleep(2);
    $meta = $files->metadataGet($meta->uri);
} while (!$meta->state->complete());
echo "\n";

if ($meta->state == FileState::Failed) {
    die("Upload failed:\n" . json_encode($meta->toArray(), JSON_PRETTY_PRINT));
}

echo "Processing complete\n" . json_encode($meta->toArray(), JSON_PRETTY_PRINT);
echo "\n{$meta->uri}";

List Files

List all uploaded files in your project.

$response = $client->files()->list(pageSize: 10);

foreach ($response->files as $file) {
    echo "Name: {$file->name}\n";
    echo "Display Name: {$file->displayName}\n";
    echo "Size: {$file->sizeBytes} bytes\n";
    echo "MIME Type: {$file->mimeType}\n";
    echo "State: {$file->state->value}\n";
    echo "---\n";
}

// Get next page if available
if ($response->nextPageToken) {
    $nextPage = $client->files()->list(pageSize: 10, nextPageToken: $response->nextPageToken);
}

Get File Metadata

Retrieve metadata for a specific file.

$meta = $client->files()->metadataGet('abc123');
// or use the full URI
$meta = $client->files()->metadataGet($file->uri);

echo "File: {$meta->displayName}\n";
echo "State: {$meta->state->value}\n";
echo "Size: {$meta->sizeBytes} bytes\n";

Delete File

Delete a file from Gemini storage.

$client->files()->delete('files/abc123');
// or use the full URI
$client->files()->delete($file->uri);

Cached Content

Context caching allows you to save and reuse precomputed input tokens for frequently used content. This reduces costs and latency for requests with large amounts of shared context.

Create Cached Content

Cache content that you'll reuse across multiple requests.

use Gemini\Data\Content;

$cachedContent = $client->cachedContents()->create(
    model: 'gemini-2.0-flash',
    systemInstruction: Content::parse('You are an expert PHP developer.'),
    parts: [
        'This is a large codebase...',
        'File 1 contents...',
        'File 2 contents...'
    ],
    ttl: '3600s', // Cache for 1 hour
    displayName: 'PHP Codebase Cache'
);

echo "Cached content created: {$cachedContent->name}\n";

List Cached Content

List all cached content in your project.

$response = $client->cachedContents()->list(pageSize: 10);

foreach ($response->cachedContents as $cached) {
    echo "Name: {$cached->name}\n";
    echo "Display Name: {$cached->displayName}\n";
    echo "Model: {$cached->model}\n";
    echo "Expires: {$cached->expireTime}\n";
    echo "---\n";
}

Get Cached Content

Retrieve a specific cached content by name.

$cached = $client->cachedContents()->retrieve('cachedContents/abc123');

echo "Model: {$cached->model}\n";
echo "Created: {$cached->createTime}\n";
echo "Expires: {$cached->expireTime}\n";

Update Cached Content

Update the expiration time of cached content.

// Extend by TTL
$updated = $client->cachedContents()->update(
    name: 'cachedContents/abc123',
    ttl: '7200s' // Extend by 2 hours
);

// Or set absolute expiration time
$updated = $client->cachedContents()->update(
    name: 'cachedContents/abc123',
    expireTime: '2024-12-31T23:59:59Z'
);

Delete Cached Content

Delete cached content when no longer needed.

$client->cachedContents()->delete('cachedContents/abc123');

Use Cached Content

Use cached content in your requests to save tokens and reduce latency.

$response = $client
    ->generativeModel(model: 'gemini-2.0-flash')
    ->withCachedContent('cachedContents/abc123')
    ->generateContent('Explain the main function in this codebase');

echo $response->text();

// Check token usage
echo "Cached tokens used: {$response->usageMetadata->cachedContentTokenCount}\n";
echo "New tokens used: {$response->usageMetadata->promptTokenCount}\n";

File Search Stores

File search allows you to search files that were uploaded through the File API.

Create File Search Store

Create a file search store.

use Gemini\Enums\FileState;
use Gemini\Enums\MimeType;

$files = $client->files();

echo "Uploading\n";
$meta = $files->upload(
    filename: 'document.pdf',
    mimeType: MimeType::APPLICATION_PDF,
    displayName: 'Document for search'
);

echo "Processing";
do {
    echo ".";
    sleep(2);
    $meta = $files->metadataGet($meta->uri);
} while (! $meta->state->complete());
echo "\n";

if ($meta->state == FileState::Failed) {
    die("Upload failed:\n".json_encode($meta->toArray(), JSON_PRETTY_PRINT));
}

$fileSearchStore = $client->fileSearchStores()->create(
    displayName: 'My Search Store',
);

echo "File search store created: {$fileSearchStore->name}\n";

Get File Search Store

Get a specific file search store by name.

$fileSearchStore = $client->fileSearchStores()->get('fileSearchStores/my-search-store');

echo "Name: {$fileSearchStore->name}\n";
echo "Display Name: {$fileSearchStore->displayName}\n";

List File Search Stores

List all file search stores.

$response = $client->fileSearchStores()->list(pageSize: 10);

foreach ($response->fileSearchStores as $fileSearchStore) {
    echo "Name: {$fileSearchStore->name}\n";
    echo "Display Name: {$fileSearchStore->displayName}\n";
    echo "--- \n";
}

Delete File Search Store

Delete a file search store by name.

$client->fileSearchStores()->delete('fileSearchStores/my-search-store');

File Search Documents

Upload File Search Document

Upload a local file directly to a file search store.

use Gemini\Enums\MimeType;

$response = $client->fileSearchStores()->upload(
    storeName: 'fileSearchStores/my-search-store',
    filename: 'document2.pdf',
    mimeType: MimeType::APPLICATION_PDF,
    displayName: 'Another Search Document'
);

echo "File search document upload operation: {$response->name}\n";

Get File Search Document

Get a specific file search document by name.

$fileSearchDocument = $client->fileSearchStores()->getDocument('fileSearchStores/my-search-store/fileSearchDocuments/my-document');

echo "Name: {$fileSearchDocument->name}\n";
echo "Display Name: {$fileSearchDocument->displayName}\n";

List File Search Documents

List all file search documents within a store.

$response = $client->fileSearchStores()->listDocuments(storeName: 'fileSearchStores/my-search-store', pageSize: 10);

foreach ($response->documents as $fileSearchDocument) {
    echo "Name: {$fileSearchDocument->name}\n";
    echo "Display Name: {$fileSearchDocument->displayName}\n";
    echo "Create Time: {$fileSearchDocument->createTime}\n";
    echo "Update Time: {$fileSearchDocument->updateTime}\n";
    echo "--- \n";
}

Delete File Search Document

Delete a file search document by name.

$client->fileSearchStores()->deleteDocument('fileSearchStores/my-search-store/fileSearchDocuments/my-document');

Embedding Resource

Embedding is a technique used to represent information as a list of floating point numbers in an array. With Gemini, you can represent text (words, sentences, and blocks of text) in a vectorized form, making it easier to compare and contrast embeddings. For example, two texts that share a similar subject matter or sentiment should have similar embeddings, which can be identified through mathematical comparison techniques such as cosine similarity.

Use the text-embedding-004 model with either embedContent or batchEmbedContents:

$response = $client
    ->embeddingModel('text-embedding-004')
    ->embedContent("Write a story about a magic backpack.");

print_r($response->embedding->values);
//[
//    [0] => 0.008624583
//    [1] => -0.030451821
//    [2] => -0.042496547
//    [3] => -0.029230341
//    [4] => 0.05486475
//    [5] => 0.006694871
//    [6] => 0.004025645
//    [7] => -0.007294857
//    [8] => 0.0057651913
//    ...
//]

$response = $client
    ->embeddingModel('text-embedding-004')
    ->batchEmbedContents("Bu bir testtir", "Deneme123");

print_r($response->embeddings);
// [
// [0] => Gemini\Data\ContentEmbedding Object
// (
//     [values] => Array
//         (
//         [0] => 0.035855837
//         [1] => -0.049537655
//         [2] => -0.06834927
//         [3] => -0.010445258
//         [4] => 0.044641383
//         [5] => 0.031156342
//         [6] => -0.007810312
//         [7] => -0.0106866965
//         ...
//         ),
// ),
// [1] => Gemini\Data\ContentEmbedding Object
// (
//     [values] => Array
//         (
//         [0] => 0.035855837
//         [1] => -0.049537655
//         [2] => -0.06834927
//         [3] => -0.010445258
//         [4] => 0.044641383
//         [5] => 0.031156342
//         [6] => -0.007810312
//         [7] => -0.0106866965
//         ...
//         ),
// ),
// ]
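To illustrate the cosine similarity comparison mentioned above, here is a small self-contained sketch. `cosineSimilarity()` is a hypothetical helper, not part of the package, and the toy vectors stand in for the much longer arrays found in `$response->embedding->values`.

```php
<?php

/**
 * Cosine similarity between two equal-length vectors.
 *
 * @param list<float> $a
 * @param list<float> $b
 */
function cosineSimilarity(array $a, array $b): float
{
    if (count($a) !== count($b) || $a === []) {
        throw new InvalidArgumentException('Vectors must be non-empty and of equal length.');
    }

    $dot = 0.0;
    $normA = 0.0;
    $normB = 0.0;
    foreach ($a as $i => $value) {
        $dot += $value * $b[$i];
        $normA += $value ** 2;
        $normB += $b[$i] ** 2;
    }

    if ($normA == 0.0 || $normB == 0.0) {
        throw new InvalidArgumentException('Cosine similarity is undefined for zero vectors.');
    }

    return $dot / (sqrt($normA) * sqrt($normB));
}

// Toy vectors standing in for real embedding values.
$first = [1.0, 0.0, 1.0];
$second = [1.0, 0.0, 1.0];
$third = [0.0, 1.0, 0.0];

printf("%.2f\n", cosineSimilarity($first, $second)); // 1.00 (same direction)
printf("%.2f\n", cosineSimilarity($first, $third));  // 0.00 (orthogonal)
```

Values close to 1 indicate texts with similar embeddings, while values near 0 indicate little relation.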

Models

We recommend checking Google documentation for the latest supported models.

List Models

Use list models to see the available Gemini models programmatically:

- pageSize (optional): The maximum number of Models to return (per page). If unspecified, 50 models will be returned per page. This method returns at most 1000 models per page, even if you pass a larger pageSize.

- nextPageToken (optional): A page token, received from a previous models.list call. Provide the page token returned by one request as an argument to the next request to retrieve the next page. When paginating, all other parameters provided to models.list must match the call that provided the page token.

$response = $client->models()->list(pageSize: 3, nextPageToken: 'ChFtb2RlbHMvZ2VtaW5pLXBybw==');

$response->models;
//[
//    [0] => Gemini\Data\Model Object
//        (
//            [name] => models/gemini-2.0-flash
//            [version] => 2.0
//            [displayName] => Gemini 2.0 Flash
//            [description] => Gemini 2.0 Flash
//            ...
//        )
//    [1] => Gemini\Data\Model Object
//        (
//            [name] => models/gemini-2.5-pro-preview-05-06
//            [version] => 2.5-preview-05-06
//            [displayName] => Gemini 2.5 Pro Preview 05-06
//            [description] => Preview release (May 6th, 2025) of Gemini 2.5 Pro
//            ...
//        )
//    [2] => Gemini\Data\Model Object
//        (
//            [name] => models/text-embedding-004
//            [version] => 004
//            [displayName] => Text Embedding 004
//            [description] => Obtain a distributed representation of a text.
//            ...
//        )
//]

$response->nextPageToken; // Chltb2RlbHMvZ2VtaW5pLTEuMC1wcm8tMDAx
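Walking through every page follows the same pattern for any paginated list call: pass the previous token, collect the items, stop when no token comes back. The sketch below shows that loop against a stubbed data source so it runs without network access. `collectAllPages()` and `$fakePages` are hypothetical names, and the commented callback for the real client is an untested assumption (in particular, that `nextPageToken` accepts null on the first call).

```php
<?php

/**
 * Collect items across pages. $fetchPage receives the previous page token
 * (null for the first page) and returns [items, nextPageToken|null].
 * $maxPages guards against an endless loop if a token keeps coming back.
 */
function collectAllPages(callable $fetchPage, int $maxPages = 100): array
{
    $items = [];
    $token = null;

    for ($page = 0; $page < $maxPages; $page++) {
        [$pageItems, $token] = $fetchPage($token);
        $items = array_merge($items, $pageItems);

        if ($token === null || $token === '') {
            break; // no further pages
        }
    }

    return $items;
}

// Stand-in for the API: three fake pages keyed by the incoming page token.
$fakePages = [
    '' => [['models/a', 'models/b'], 'token-1'],
    'token-1' => [['models/c'], 'token-2'],
    'token-2' => [['models/d'], null],
];

$names = collectAllPages(fn (?string $token): array => $fakePages[$token ?? '']);

print_r($names);

// With the real client the callback might look like this (untested sketch):
// function (?string $token) use ($client): array {
//     $response = $client->models()->list(pageSize: 50, nextPageToken: $token);
//     return [$response->models, $response->nextPageToken];
// }
```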

Get Model

Get information about a model, such as version, display name, input token limit, etc.

$response = $client->models()->retrieve('models/gemini-2.5-pro-preview-05-06');

$response->model;
//Gemini\Data\Model Object
//(
//    [name] => models/gemini-2.5-pro-preview-05-06
//    [version] => 2.5-preview-05-06
//    [displayName] => Gemini 2.5 Pro Preview 05-06
//    [description] => Preview release (May 6th, 2025) of Gemini 2.5 Pro
//    ...
//)

Troubleshooting

Timeout

You may run into a timeout when sending requests to the API. The default timeout depends on the HTTP client used.

You can increase the timeout by configuring the HTTP client and passing it to the factory.

This example illustrates how to increase the timeout using Guzzle.

Gemini::factory()
    ->withApiKey($apiKey)
    ->withHttpClient(new \GuzzleHttp\Client(['timeout' => $timeout]))
    ->make();

Testing

The package provides a fake implementation of the Gemini\Client class that allows you to fake the API responses.

To test your code, swap the Gemini\Client class with the Gemini\Testing\ClientFake class in your test case.

The fake responses are returned in the order they are provided while creating the fake client.

All responses have a fake() method that lets you create a response object by providing only the parameters relevant to your test case.

use Gemini\Testing\ClientFake;
use Gemini\Responses\GenerativeModel\GenerateContentResponse;

$client = new ClientFake([
    GenerateContentResponse::fake([
        'candidates' => [
            [
                'content' => [
                    'parts' => [
                        [
                            'text' => 'success',
                        ],
                    ],
                ],
            ],
        ],
    ]),
]);

$result = $client->generativeModel(model: 'gemini-2.0-flash')->generateContent('test');

expect($result->text())->toBe('success');

In case of a streamed response you can optionally provide a resource holding the fake response data.

use Gemini\Testing\ClientFake;
use Gemini\Responses\GenerativeModel\GenerateContentResponse;

$client = new ClientFake([
    GenerateContentResponse::fakeStream(),
]);

$result = $client->generativeModel(model: 'gemini-2.0-flash')->streamGenerateContent('Hello');

expect($result->getIterator()->current())
    ->text()->toBe('In the bustling city of Aethelwood, where the cobblestone streets whispered');

After the requests have been sent there are various methods to ensure that the expected requests were sent:

use Gemini\Resources\GenerativeModel;
use Gemini\Resources\Models;

// assert list models request was sent
$client->models()->assertSent(callback: function ($method) {
    return $method === 'list';
});
// or
$client->assertSent(resource: Models::class, callback: function ($method) {
    return $method === 'list';
});

// assert a generateContent request was sent
$client->generativeModel(model: 'gemini-2.0-flash')->assertSent(function (string $method, array $parameters) {
    return $method === 'generateContent' &&
        $parameters[0] === 'Hello';
});
// or
$client->assertSent(resource: GenerativeModel::class, model: 'gemini-2.0-flash', callback: function (string $method, array $parameters) {
    return $method === 'generateContent' &&
        $parameters[0] === 'Hello';
});

// assert 2 generative model requests were sent
$client->assertSent(resource: GenerativeModel::class, model: 'gemini-2.0-flash', callback: 2);
// or
$client->generativeModel(model: 'gemini-2.0-flash')->assertSent(2);

// assert no generative model requests were sent
$client->assertNotSent(resource: GenerativeModel::class, model: 'gemini-2.0-flash');
// or
$client->generativeModel(model: 'gemini-2.0-flash')->assertNotSent();

// assert no requests were sent
$client->assertNothingSent();

To write tests expecting the API request to fail you can provide a Throwable object as the response.

use Gemini\Testing\ClientFake;
use Gemini\Exceptions\ErrorException;

$client = new ClientFake([
    new ErrorException([
        'message' => 'The model `gemini-basic` does not exist',
        'status' => 'INVALID_ARGUMENT',
        'code' => 400,
    ]),
]);

// the `ErrorException` will be thrown
$client->generativeModel(model: 'gemini-2.0-flash')->generateContent('test');