andrey-helldar / stable-diffusion-ui-samplers-generator

Sampler generator for Easy Diffusion UI

Package info

github.com/andrey-helldar/easy-diffusion-ui-samplers-generator

pkg:composer/andrey-helldar/stable-diffusion-ui-samplers-generator

Requires

- php: ^8.1

- ext-imagick: *

- ext-json: *

- archtechx/enums: ^0.3.1

- dragon-code/simple-dto: ^2.7

- dragon-code/support: ^6.8

- guzzlehttp/guzzle: ^7.5

- illuminate/console: ^9.45

- intervention/image: ^2.7

- nesbot/carbon: ^2.64

- symfony/console: ^6.2

- symfony/dom-crawler: ^6.2

Requires (Dev)

- symfony/var-dumper: ^6.2

README

Installation

First, download and run Easy Diffusion.

Next, make sure you have Composer, PHP 8.1 or higher (with the imagick and json extensions) and Git installed on your computer.

Next, you may create a new Samplers Generator project via the Composer create-project command:

composer create-project andrey-helldar/easy-diffusion-ui-samplers-generator easy-diffusion-ui-samplers-generator

Alternatively, clone this repository:

git clone git@github.com:andrey-helldar/easy-diffusion-ui-samplers-generator.git

Next, go to the project folder and, if you cloned the repository, install the dependencies:

cd ./easy-diffusion-ui-samplers-generator

composer install

Configuration

The project has a few static settings: the number of steps used to generate images and the image sizes.

Configuration files are located in the config folder.

Usage

First, run the neural network's Python script. See more: https://github.com/cmdr2/stable-diffusion-ui/wiki/How-to-Use

Sampler generation for all available models

To do this, call the bin/sampler models console command and pass it the required --prompt option.

For example:

bin/sampler models --prompt "a photograph of an astronaut riding a horse"

To generate a sampler table for a previously generated image, also pass that image's seed with the --seed option.

For example:

bin/sampler models --prompt "a photograph of an astronaut riding a horse" --seed 2699388

Available options

- --prompt: Query string for image generation (required). A string.

- --negative-prompt: Words to exclude from image generation. A string.

- --tags: Image generation modifiers. An array.

- --fix-faces: Fixes incorrect faces and eyes using GFPGANv1.3. A boolean.

- --path: Path where the generated samples are saved. By default, the ./build subfolder inside the current directory.

- --seed: Seed of a previously generated image.

- --output-format: File export format, jpeg or png. By default, jpeg.

- --output-quality: Output image quality as a percentage. By default, 75.
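To illustrate how the output defaults listed above fit together, here is a minimal PHP sketch. It is not taken from the package: the function normalizeOutputOptions is hypothetical, and rejecting unsupported formats or out-of-range quality values is an assumption. Only the defaults themselves (jpeg, 75 and ./build) come from this README.

```php
<?php

declare(strict_types=1);

/**
 * Hypothetical helper (not part of this package): applies the documented
 * defaults for --output-format, --output-quality and --path.
 *
 * @return array{format: string, quality: int, path: string}
 */
function normalizeOutputOptions(?string $format, ?int $quality, ?string $path): array
{
    // Documented default: jpeg. Only jpeg and png are documented as supported.
    $format = strtolower($format ?? 'jpeg');

    if (! in_array($format, ['jpeg', 'png'], true)) {
        throw new InvalidArgumentException("Unsupported output format: {$format}");
    }

    // Documented default: 75. The 0..100 range check is an assumption.
    $quality ??= 75;

    if ($quality < 0 || $quality > 100) {
        throw new InvalidArgumentException("Output quality must be between 0 and 100, got {$quality}");
    }

    // Documented default: the ./build subfolder of the current directory.
    $path ??= getcwd() . DIRECTORY_SEPARATOR . 'build';

    return ['format' => $format, 'quality' => $quality, 'path' => $path];
}

// Usage: no options passed, so every default applies.
$options = normalizeOutputOptions(null, null, null);
echo $options['format'], ' ', $options['quality'], PHP_EOL; // jpeg 75
```

Validating the options up front means a typo such as --output-format gif fails immediately instead of after a long generation run.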

Sampler generation for one model

To do this, call the bin/sampler model console command and pass it the required --prompt and --model options.

For example:

bin/sampler model --prompt "a photograph of an astronaut riding a horse" --model "sd-v1-4"

To generate a sampler table for a previously generated image, also pass that image's seed with the --seed option.

For example:

bin/sampler model --prompt "a photograph of an astronaut riding a horse" --model "sd-v1-4" --seed 2699388

Available options

- --model: Model used to generate the samples (required). A string.

- --prompt: Query string for image generation (required). A string.

- --negative-prompt: Words to exclude from image generation. A string.

- --tags: Image generation modifiers. An array.

- --fix-faces: Fixes incorrect faces and eyes using GFPGANv1.3. A boolean.

- --path: Path where the generated samples are saved. By default, the ./build subfolder inside the current directory.

- --seed: Seed of a previously generated image.

- --output-format: File export format, jpeg or png. By default, jpeg.

- --output-quality: Output image quality as a percentage. By default, 75.
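The options above end up in a request to Easy Diffusion. The following PHP sketch shows one plausible mapping for a few of them. The function buildRequestBody is hypothetical, and the field names are borrowed from the sample configuration at the end of this README. How the prompt is combined with the tags is inferred from that sample, whose prompt ends with its active tags joined by commas; the package may build it differently.

```php
<?php

declare(strict_types=1);

/**
 * Hypothetical helper (not part of this package): maps the command options to a
 * subset of the request fields that appear in the sample configuration in this README.
 *
 * @param list<string> $tags
 * @return array<string, mixed>
 */
function buildRequestBody(string $prompt, string $model, array $tags = [], string $negativePrompt = '', ?int $seed = null): array
{
    $body = [
        // Assumption based on the sample configuration: the final prompt is the
        // query string followed by the active tags, separated by ", ".
        'prompt'                     => implode(', ', [$prompt, ...$tags]),
        'negative_prompt'            => $negativePrompt,
        'active_tags'                => $tags,
        'use_stable_diffusion_model' => $model,
    ];

    // Only pin the seed when --seed was given, to reproduce an earlier image.
    if ($seed !== null) {
        $body['seed'] = $seed;
    }

    return $body;
}

$body = buildRequestBody('a photograph of an astronaut riding a horse', 'sd-v1-4', ['(HD)', 'Film Grain'], '', 2699388);
echo $body['prompt'], PHP_EOL; // a photograph of an astronaut riding a horse, (HD), Film Grain
```

Leaving the seed out when --seed is absent keeps the choice of a new seed with the server, while passing it makes the sampler table comparable with the original image.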

Sampler generation for all models based on configuration files

You can also copy an image's configuration from the Stable Diffusion UI (Easy Diffusion) web interface to the clipboard and, using any text editor, save it as a .json file in any folder on your computer.

Once you have saved as many configuration files as you need in a folder, call the bin/sampler settings command and pass the path to that folder with the --path option:

bin/sampler settings --path /home/user/samplers

# or for Windows

bin/sampler settings --path "D:\exports\Samplers"

When run, the command finds all .json files in the root of the specified folder (subfolders are not searched), checks that each one is filled in correctly (invalid files are skipped without raising an error), and then, for each file, generates samplers for every model available to the neural network.

Each sampler table is generated using the seed taken from its configuration file.
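The file discovery described above can be sketched in PHP as follows. The function findConfigurations and its validity check (an integer seed plus a non-empty reqBody.prompt, modelled on the sample configuration in this README) are assumptions; the package's own rules for a correctly filled file may differ.

```php
<?php

declare(strict_types=1);

/**
 * Hypothetical helper (not part of this package): collects the configuration
 * files that the settings command would process. Only *.json files in the
 * root of $directory are considered, and invalid files are skipped silently.
 *
 * @return array<string, array<string, mixed>> Parsed configurations keyed by file name without extension.
 */
function findConfigurations(string $directory): array
{
    $configs = [];

    // glob() does not descend into subfolders, so the search is non-recursive.
    foreach (glob(rtrim($directory, '/\\') . DIRECTORY_SEPARATOR . '*.json') ?: [] as $file) {
        // Skip anything that is not a regular file, e.g. a directory named *.json.
        if (! is_file($file)) {
            continue;
        }

        $data = json_decode((string) file_get_contents($file), true);

        // Assumed minimum: an integer seed and a reqBody with a non-empty prompt.
        $valid = is_array($data)
            && is_int($data['seed'] ?? null)
            && is_string($data['reqBody']['prompt'] ?? null)
            && $data['reqBody']['prompt'] !== '';

        if ($valid) {
            $configs[pathinfo($file, PATHINFO_FILENAME)] = $data;
        }
    }

    return $configs;
}
```

Assuming both files in the example below are valid, findConfigurations('/home/user/samplers') would return entries keyed woman and man, matching the subfolder names in the output listing.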

Example output:

INFO: Run: woman.json

INFO aloeVeraSSimpMaker3K_simpMaker3K1

20/20 [============================] 100%

Storing ......................................... 113ms DONE

Elapsed Time ........................... 2 minute 47 seconds

INFO sd-v1-4

20/20 [============================] 100%

Storing ......................................... 113ms DONE

Elapsed Time ............................ 3 minute 7 seconds

INFO: Run: man.json

INFO aloeVeraSSimpMaker3K_simpMaker3K1

20/20 [============================] 100%

Storing ......................................... 113ms DONE

Elapsed Time ........................... 2 minute 12 seconds

INFO sd-v1-4

20/20 [============================] 100%

Storing ......................................... 113ms DONE

Elapsed Time ........................... 3 minute 12 seconds

Output Path .......... /home/user/samplers/2022-12-26_21-06-32

Elapsed Time ........................... 11 minutes 18 seconds

The output folder will contain the generated sampler images (jpeg or png) together with their configuration files.

For example:

/home/user/samplers/woman.json

/home/user/samplers/2022-12-26_21-06-32/woman/aloeVeraSSimpMaker3K_simpMaker3K1__vae-ft-mse-840000-ema-pruned.png

/home/user/samplers/2022-12-26_21-06-32/woman/aloeVeraSSimpMaker3K_simpMaker3K1__vae-ft-mse-840000-ema-pruned.json

/home/user/samplers/2022-12-26_21-06-32/woman/sd-v1-4__vae-ft-mse-840000-ema-pruned.png

/home/user/samplers/2022-12-26_21-06-32/woman/sd-v1-4__vae-ft-mse-840000-ema-pruned.json

/home/user/samplers/man.json

/home/user/samplers/2022-12-26_21-06-32/man/aloeVeraSSimpMaker3K_simpMaker3K1__vae-ft-mse-840000-ema-pruned.png

/home/user/samplers/2022-12-26_21-06-32/man/aloeVeraSSimpMaker3K_simpMaker3K1__vae-ft-mse-840000-ema-pruned.json

/home/user/samplers/2022-12-26_21-06-32/man/sd-v1-4__vae-ft-mse-840000-ema-pruned.png

/home/user/samplers/2022-12-26_21-06-32/man/sd-v1-4__vae-ft-mse-840000-ema-pruned.json
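The naming scheme visible in this listing can be expressed as a small PHP sketch. The function samplerPaths is hypothetical; the Y-m-d_H-i-s timestamp format is inferred from 2022-12-26_21-06-32, and using the --output-format value directly as the file extension is an assumption (the README does not say whether jpeg files end in .jpeg or .jpg).

```php
<?php

declare(strict_types=1);

/**
 * Hypothetical helper (not part of this package): reproduces the layout of the
 * example listing, <root>/<timestamp>/<config name>/<model>__<vae>.<extension>.
 *
 * @return array{image: string, config: string}
 */
function samplerPaths(string $root, DateTimeInterface $startedAt, string $configName, string $model, string $vae, string $format): array
{
    // One timestamped folder per run, one subfolder per configuration file.
    $directory = implode('/', [rtrim($root, '/'), $startedAt->format('Y-m-d_H-i-s'), $configName]);
    $basename = $model . '__' . $vae;

    return [
        'image'  => "{$directory}/{$basename}.{$format}",
        // The configuration is stored next to the image under the same base name.
        'config' => "{$directory}/{$basename}.json",
    ];
}

$paths = samplerPaths('/home/user/samplers', new DateTimeImmutable('2022-12-26 21:06:32'), 'woman', 'sd-v1-4', 'vae-ft-mse-840000-ema-pruned', 'png');
echo $paths['image'], PHP_EOL;
// /home/user/samplers/2022-12-26_21-06-32/woman/sd-v1-4__vae-ft-mse-840000-ema-pruned.png
```

Forward slashes are used as separators because PHP accepts them on Windows as well; a timestamped run folder keeps repeated runs over the same configurations from overwriting each other.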

Available options

- --path: Path to the folder containing the configuration files. The results are saved to a timestamped subfolder inside it.

- --output-format: File export format, jpeg or png. By default, jpeg.

- --output-quality: Output image quality as a percentage. By default, 75.

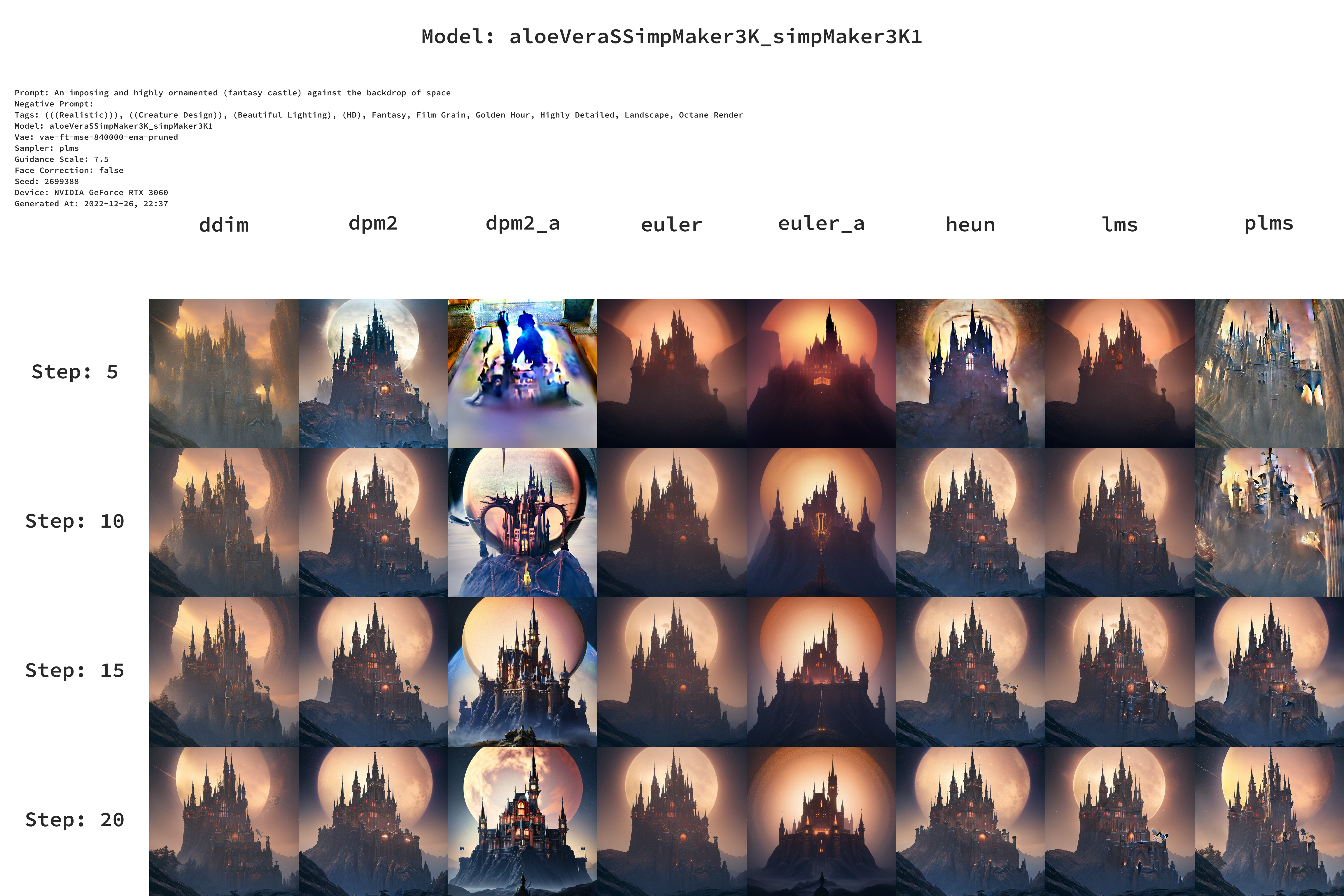

Example

Source and Sampler: example images (not reproduced in this text).

Config

The last configuration used for the model is saved to a .json file next to the image:

{
    "numOutputsTotal": 1,
    "seed": 2699388,
    "reqBody": {
        "prompt": "An imposing and highly ornamented (fantasy castle) against the backdrop of space, (((Realistic))), ((Creature Design)), (Beautiful Lighting), (HD), Fantasy, Film Grain, Golden Hour, Highly Detailed, Landscape, Octane Render",
        "negative_prompt": "",
        "active_tags": [
            "(((Realistic)))",
            "((Creature Design))",
            "(Beautiful Lighting)",
            "(HD)",
            "Fantasy",
            "Film Grain",
            "Golden Hour",
            "Highly Detailed",
            "Landscape",
            "Octane Render"
        ],
        "width": 512,
        "height": 512,
        "seed": 2699388,
        "num_inference_steps": 20,
        "guidance_scale": 7.5,
        "use_face_correction": false,
        "sampler": "plms",
        "use_stable_diffusion_model": "aloeVeraSSimpMaker3K_simpMaker3K1",
        "use_vae_model": "vae-ft-mse-840000-ema-pruned",
        "use_hypernetwork_model": "",
        "hypernetwork_strength": 1,
        "num_outputs": 1,
        "stream_image_progress": false,
        "show_only_filtered_image": true,
        "output_format": "png"
    }
}

FAQ

Q: Why is it not written in Python?

A: I wanted to write a pet project in one evening, and I don't know Python 😁

Q: What models do you use?

A: For various purposes, I use the following models:

- Models:

- https://civitai.com/?types=Checkpoint

- https://civitai.com/models/1102/synthwavepunk (version: 3)

- https://civitai.com/models/1259/elldreths-og-4060-mix (version: 1)

- https://civitai.com/models/1116/rpg (version: 1)

- https://civitai.com/models/1186/novel-inkpunk-f222 (version: 1)

- https://civitai.com/models/5/elden-ring-style (version: 3)

- https://civitai.com/models/1377/sidon-architectural-model (version: 1)

- https://rentry.org/sdmodels


- VAE

License

This package is licensed under the MIT License.