axyr / laravel-langfuse

Langfuse PHP SDK for Laravel

Requires

- php: ^8.2

- illuminate/contracts: ^12.0|^13.0

- illuminate/http: ^12.0|^13.0

- illuminate/support: ^12.0|^13.0

Requires (Dev)

- larastan/larastan: ^3.0

- laravel/pint: ^1.0

- orchestra/testbench: ^10.0|^11.0

- pestphp/pest: ^4.0

- phpmd/phpmd: ^2.0

- prism-php/prism: ^0.100

Suggests

- laravel/ai: Required for automatic Laravel AI SDK tracing (^0.4)

- neuron-core/neuron-ai: Required for automatic Neuron AI agent tracing (^3.0)

- prism-php/prism: Required for automatic Prism LLM call tracing (^0.100)

README

Laravel Langfuse

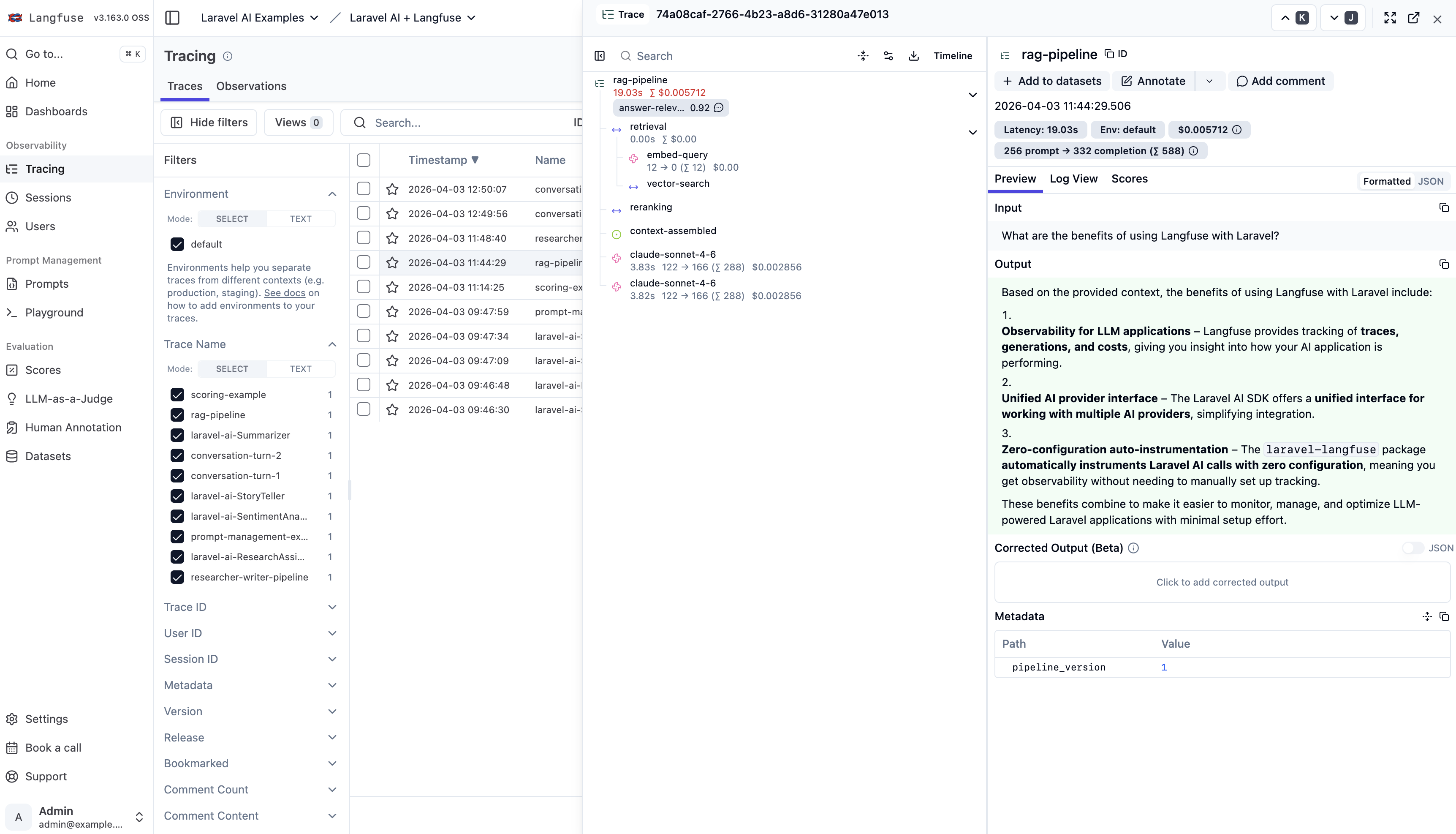

Langfuse is an open-source observability platform for LLM applications. It gives you a dashboard to trace every LLM call, track token usage and costs, manage prompt versions, and evaluate output quality - all in one place. It's self-hostable or available as a managed cloud service.

This package connects your Laravel app to Langfuse. Send traces, generations, scores, and prompts with a clean, idiomatic API - or let the auto-instrumentation do it for you.

    use Axyr\Langfuse\LangfuseFacade as Langfuse;

    $trace = Langfuse::trace(new TraceBody(name: 'chat-request'));

    $generation = $trace->generation(new GenerationBody(
        name: 'chat',
        model: 'gpt-4',
        input: [['role' => 'user', 'content' => 'Hello!']],
    ));

    // After the LLM responds:
    $generation->end(
        output: 'Hi there!',
        usage: new Usage(input: 12, output: 85, total: 97),
    );

Events are batched and flushed automatically. Zero-code auto-instrumentation is available for Laravel AI, Prism, and Neuron AI.
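To make the batching idea concrete, here is a minimal, self-contained sketch of a size-triggered event buffer. It is an illustration only, not the package's implementation: the `EventBuffer` class, its `push()` and `flush()` methods, and the `$send` callback are hypothetical names invented for this example.

```php
<?php

declare(strict_types=1);

/**
 * Hypothetical illustration of event batching.
 * Not a class from axyr/laravel-langfuse.
 */
final class EventBuffer
{
    /** @var list<array<string, mixed>> Events waiting to be sent. */
    private array $events = [];

    public function __construct(
        private int $batchSize,
        private \Closure $send, // receives one batch (an array of events)
    ) {
    }

    /** Queue an event and flush as soon as the batch is full. */
    public function push(array $event): void
    {
        $this->events[] = $event;

        if (count($this->events) >= $this->batchSize) {
            $this->flush();
        }
    }

    /** Send whatever is queued; does nothing when the buffer is empty. */
    public function flush(): void
    {
        if ($this->events === []) {
            return;
        }

        $batch = $this->events;
        $this->events = [];
        ($this->send)($batch);
    }
}

// Collect "sent" batches in memory instead of calling a real API.
$sent = [];
$buffer = new EventBuffer(
    batchSize: 2,
    send: function (array $batch) use (&$sent): void {
        $sent[] = $batch;
    },
);

$buffer->push(['type' => 'trace-create']);
$buffer->push(['type' => 'generation-create']); // batch of 2 is sent here
$buffer->push(['type' => 'score-create']);      // stays queued

// An application would trigger this final flush at shutdown.
$buffer->flush();

echo count($sent), PHP_EOL; // 2
```

The final `flush()` is what keeps the trailing, partially filled batch from being lost, which is why a flush at shutdown matters for any buffer of this shape.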

Features

- Full observability - traces, spans, generations, events, and scores with automatic parent-child nesting

- Prompt management - fetch, cache, compile, create, and list prompts with stale-while-revalidate caching

- Auto-instrumentation - zero-code tracing for Prism, Laravel AI, and Neuron AI

- Automatic batching - events queued and sent in batches, with optional async dispatch via Laravel queues

- Production-ready - Octane compatible, graceful degradation, auto-flush on shutdown, testing fakes
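The stale-while-revalidate caching mentioned for prompt management can be sketched in plain PHP as follows. This is a generic illustration of the technique under stated assumptions, not the package's code: the `StaleWhileRevalidateCache` class, its methods, and the explicit `$now` timestamps (passed in to keep the example deterministic) are all hypothetical.

```php
<?php

declare(strict_types=1);

/**
 * Hypothetical illustration of stale-while-revalidate caching.
 * Not a class from axyr/laravel-langfuse.
 */
final class StaleWhileRevalidateCache
{
    /** @var array<string, array{value: mixed, fetchedAt: int}> */
    private array $entries = [];

    /** @var array<string, callable> Refreshes to run later, keyed by cache key. */
    private array $pendingRefreshes = [];

    public function __construct(private int $ttlSeconds)
    {
    }

    public function get(string $key, callable $fetch, int $now): mixed
    {
        $entry = $this->entries[$key] ?? null;

        if ($entry === null) {
            // Cold cache: nothing to serve, so fetch synchronously.
            return $this->store($key, $fetch(), $now);
        }

        if ($now - $entry['fetchedAt'] >= $this->ttlSeconds) {
            // Stale: serve the old value now and queue a refresh for later.
            $this->pendingRefreshes[$key] = $fetch;
        }

        return $entry['value'];
    }

    /** Run queued refreshes, for example after the response has been sent. */
    public function revalidate(int $now): void
    {
        foreach ($this->pendingRefreshes as $key => $fetch) {
            $this->store($key, $fetch(), $now);
        }

        $this->pendingRefreshes = [];
    }

    private function store(string $key, mixed $value, int $now): mixed
    {
        $this->entries[$key] = ['value' => $value, 'fetchedAt' => $now];

        return $value;
    }
}

$cache = new StaleWhileRevalidateCache(ttlSeconds: 60);

// Stand-in for a remote prompt fetch; each call yields a new version.
$version = 0;
$fetch = function () use (&$version): string {
    $version++;

    return "prompt v{$version}";
};

echo $cache->get('greeting', $fetch, now: 0), PHP_EOL;  // cold: prompt v1
echo $cache->get('greeting', $fetch, now: 30), PHP_EOL; // fresh: prompt v1
echo $cache->get('greeting', $fetch, now: 90), PHP_EOL; // stale: prompt v1
$cache->revalidate(now: 90);
echo $cache->get('greeting', $fetch, now: 95), PHP_EOL; // refreshed: prompt v2
```

The trade-off is that a caller may briefly see an outdated value, in exchange for never blocking on the remote fetch once the cache is warm.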

Installation

Requires PHP 8.2+ and Laravel 12 or 13.

Langfuse Compatibility: This package is compatible with both Langfuse v2 and v3. For self-hosted deployments, v3 introduces an asynchronous architecture with improved reliability and performance.

    composer require axyr/laravel-langfuse

Add your Langfuse credentials to .env:

    LANGFUSE_PUBLIC_KEY=pk-lf-...
    LANGFUSE_SECRET_KEY=sk-lf-...

Optionally publish the configuration file:

    php artisan vendor:publish --tag=langfuse-config

Examples

Working example projects for each integration:

- Laravel AI + Langfuse - agents, tools, streaming, and scoring with the official Laravel AI SDK

- Prism + Langfuse - text, structured output, and streaming with Prism

- Neuron AI + Langfuse - agent workflows with Neuron AI

Documentation

Full documentation in the docs/ directory:

- Configuration - env vars, config publishing

- Tracing - traces, updating, nesting observations

- Generations - LLM generation tracking, usage and cost

- Spans and Events - non-LLM work, event logging

- Scores - numeric, boolean, categorical scores

- Prompt Management - fetch, cache, compile, create

- Integrations - Prism, Laravel AI, Neuron AI

- Middleware - request trace context

- Batching and Flushing - flush control, queued dispatch

- Testing - fakes and assertions

- Architecture - system diagram, Octane compatibility

- Troubleshooting - Langfuse v3 compatibility, common issues

Contributing

Contributions welcome. Open an issue first to discuss what you'd like to change.

    composer test   # Run tests
    composer pint   # Fix code style

License

MIT

Author

Built by Martijn van Nieuwenhoven - Laravel developer specializing in AI integrations and observability tooling.